Tag Archives: Agile

Cookbook: Maven Source Code Samples

Our Git repository contains an extensive collection of various code examples for Apache Maven projects. Everything is clearly organized by topic.


- Token Replacement

- Compiler Warnings

- Executable JAR Files

- Enforcements

- Unit & Integration Testing

- Multi Module Project (JAR / WAR)

- BOM – Bill Of Materials (Dependency Management)

- Running ANT Tasks

- License Header – Plugin

- OWASP

- Profiles

- Maven Wrapper

- Shade Ueber JAR (Plugin)

- Java API Documentation (JavaDoc)

- Java Sources & Test Case packaging into JARs

- Docker

- Assemblies

- Maven Reporting (site)

- Flatten a POM

- GPG Signer

Vibe coding – a new plague of the internet?

When I first read the term vibe coding, I thought of headphones, chill music and slipping into flow. The absolute state of creativity that programmers chase. A rush of productivity. But no, it quickly became clear to me that it was about something else.

Vibe coding is the name given to what you enter into an AI via the prompt in order to get a usable program. The output of the Large Language Model (LLM) is not yet the executable program, but only the corresponding source code in the programming language that the Vibe Coder specifies. Therefore, depending on the target platform, the Vibe Coder still needs the skills to build and run the whole thing.

Since I’ve been active in IT, the salespeople’s dream has been there: You no longer need programmers to develop applications for customers. So far, all approaches of this kind have been less than successful, because no matter what you did, there was no solution that worked completely without programmers. A lot has changed since the general availability of AI systems and it is only a matter of time before LLM systems such as Copilot etc. also deliver executable applications.

The possibilities that Vibe Coding opens up are quite remarkable if you know what you are doing. If you don’t, you end up like Goethe’s sorcerer’s apprentice, who was no longer able to master the spirits he had summoned. Are programmers now becoming obsolete? I don’t think the programming profession will die out in the foreseeable future. But a lot will change and the requirements will be very high.

I can definitely say that I am open to AI assistance in programming. However, my experiences so far have taught me to be very careful about what the LLMs suggest as a solution. Maybe it’s because my questions were very specific and for specific cases. The answers were occasionally a pointer in a possible direction that turned out to be successful. But without your own specialist knowledge and experience, all of the AI’s answers would not have been usable. Justifications or explanations should also be treated with caution in this context.

There are now various courses that want to teach people how to use artificial intelligence. In plain language: how to formulate a functioning prompt. I think such offers are dubious, because the LLMs were developed to understand natural (human) language. So what is there to learn, other than formulating complete and understandable sentences?

Anyone who creates an entire application using Vibe Coding must test it extensively. So click through the functions and see if everything works as it should. This can turn into a very annoying activity that becomes more annoying with each run.

The use of programs created by Vibe Coding is also unproblematic as long as they run locally on your own computer and are not freely accessible as a commercial Internet service. Because this is exactly where the danger lurks. The programs created by Vibe Coding are not sufficiently protected against hacker attacks, which is why they should only be operated in closed environments. I can also well imagine that in the future the use of vibe-coded programs will be prohibited in security-critical environments such as public authorities or banks. As soon as the first cyber attacks on company networks through vibe-coded programs become known, the bans will follow.

Besides the question of security for Vibe Coding applications, modifications and extensions will be extremely difficult to implement. This phenomenon is well-known in software development and occurs regularly with so-called legacy applications. As soon as you hear that something has grown organically over time, you’re already in the thick of it. A lack of structure and so-called technical debt cause a project to erode over time to such an extent that the impact of changes on the remaining functionality becomes very difficult to assess. It is therefore likely that there will be many migration projects in the future to convert AI-generated codebases back into clean structures. For this reason, Vibe Coding is particularly suitable for creating prototypes to test concepts.

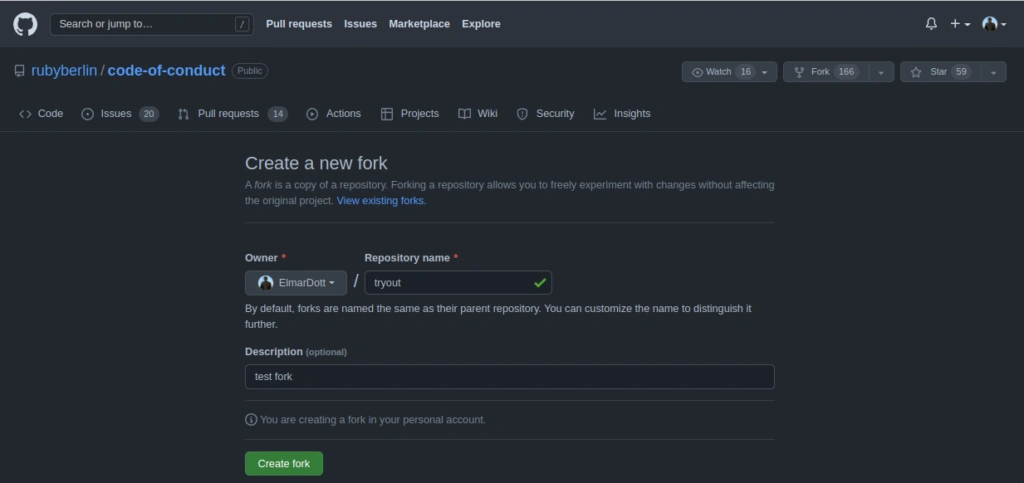

There are now also complaints in open source projects that, every now and then, contributions arrive that rewrite almost half of the code base and add faulty functions. Of course, common sense and the many standards established in software development help here. It’s not like we haven’t had experience with bad code commits in open source before. This gave rise to the dictator workflow for tools like Git, which the code hosting platform GitHub renamed Pull Request.

So how can you quickly identify bad code? My current prescription is to check test coverage for added code. No testing, no code merge. Of course, test cases can also be Vibe Coded or lack necessary assertions, which can now also be easily recognized automatically. In my many years in software development projects, I’ve experienced enough that no Vibe Coder can even come close to bringing beads of sweat to my forehead.
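The rule “no testing, no code merge” can be sketched as a small gate. This is only an illustration: the class `CoverageGate`, its method names, and the 80 % threshold are assumptions made for this example. In a real project the line counts would come from a coverage tool and the check would run in the build pipeline.

```java
/** Hypothetical merge gate: rejects a contribution whose added lines are not sufficiently covered by tests. */
final class CoverageGate {

    private final double minimumRatio;

    CoverageGate(double minimumRatio) {
        if (minimumRatio < 0.0 || minimumRatio > 1.0) {
            throw new IllegalArgumentException("minimumRatio must be between 0 and 1");
        }
        this.minimumRatio = minimumRatio;
    }

    /**
     * @param addedLines   executable lines added by the contribution
     * @param coveredLines how many of those lines were executed by tests
     * @return true if the contribution may be merged
     */
    boolean allowsMerge(int addedLines, int coveredLines) {
        if (addedLines < 0 || coveredLines < 0 || coveredLines > addedLines) {
            throw new IllegalArgumentException("invalid line counts");
        }
        if (addedLines == 0) {
            return true; // nothing executable was added, e.g. a documentation-only change
        }
        return (double) coveredLines / addedLines >= minimumRatio;
    }

    public static void main(String[] args) {
        CoverageGate gate = new CoverageGate(0.8); // assumed threshold, adjust per project
        System.out.println("200 lines, no tests:  " + gate.allowsMerge(200, 0));
        System.out.println("200 lines, 170 covered: " + gate.allowsMerge(200, 170));
    }
}
```

A gate like this only measures whether code was executed by tests, not whether the tests assert anything meaningful, which is why the check for missing assertions mentioned above remains a separate step.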

My conclusion on the subject of Vibe Coding is: In the future, there will be a shortage of capable programmers who will be able to fix tons of bad production code. So it’s not a dying profession in the foreseeable future. On the other hand, a few clever people will definitely script together a few powerful isolated solutions for their own business with simple IT knowledge that will lead to competitive advantages. As we experience this transformation, the Internet will continue to become cluttered and the gems Weizenbaum once spoke of will become harder to find.

Featureitis

You don’t have to be a software developer to recognize a good application. But from my own experience, I’ve often seen programs that were promising and innovative at the start mutate into unwieldy behemoths once they reach a certain number of users. Since I’ve been making this observation regularly for several decades now, I’ve wondered what the reasons for this might be.

The phenomenon of software programs, or solutions in general, becoming overloaded with details was termed “featuritis” by Brooks in his classic book, “The Mythical Man-Month.” Considering that the first edition of the book was published in 1975, it’s fair to say that this is a long-standing problem. Perhaps the most famous example of featureitis is Microsoft’s Windows operating system. Of course, there are countless other examples of improvements that make things worse.

Windows users who knew Windows XP were confronted with its “wonderful” successor Vista, appeased again by Windows 7, nearly given a heart attack by Windows 8 and 8.1, and calmed down once more when Windows 10 arrived. At least for a short time, until the forced updates quickly brought them back down to earth. And don’t even get me started on Windows 11. The old saying about Windows was that every other version is junk and should be skipped. Well, that hasn’t been true since Windows 7. For me, Windows 10 was the deciding factor in completely abandoning Microsoft, and like many others, I moved to a different operating system. Some switched to Apple, and those who couldn’t afford or didn’t want the expensive hardware, like me, opted for a Linux system. This shows how a lack of insight can quickly lead to the loss of significant market share. Since Microsoft isn’t drawing any conclusions from these developments, this seems to be of little concern to the company. Other companies can quickly be pushed to the brink of collapse, and beyond, by such events.

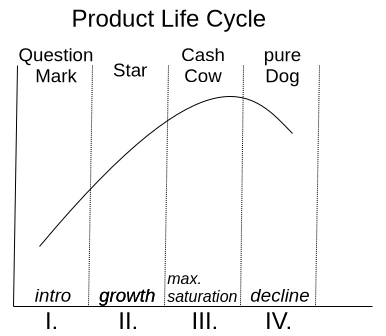

One motivation for adding more and more features to an existing application is the so-called product life cycle, which is represented by the BCG matrix in Figure 1.

With a product’s launch, it’s not yet certain whether it will be accepted by the market. If users embrace it, it quickly rises to stardom and reaches its maximum market position as a cash cow. Once market saturation is reached, it degrades to a slow seller. So far, so good. Unfortunately, the prevailing management view is that if no growth is generated compared to the previous quarter, market saturation has already been reached. This leads to the nonsensical assumption that users must be forced to accept an updated version of the product every year. Of course, the only way to motivate a purchase is to print a bulging list of new features on the packaging.

Since well-designed features can’t simply be churned out on an assembly line, a redesign of the graphical user interface is thrown in as a free bonus every time. Ultimately, this gives the impression of having something completely new, as it requires a period of adjustment to discover the new placement of familiar functions. It’s not as if the redesign actually streamlines the user experience or increases productivity. The arrangement of input fields and buttons always seems haphazardly thrown together.

But don’t worry, I’m not calling for an update boycott; I just want to talk about how things can be improved. Because one thing is certain: thanks to artificial intelligence, the market for software products will change dramatically in just a few years. I don’t expect complex and specialized applications to be produced by AI algorithms anytime soon. However, I do expect that enough poorly generated AI code, which the developer doesn’t understand, will be injected into the codebases of these applications to make them unstable. This is why I’m refocusing on clean, handcrafted, efficient, and reliable software, because I’m sure there will always be a market for it.

I simply don’t want an internet browser that has mutated into a communication hub, offering chat, email, cryptocurrency payments, and who knows what else, in addition to simply displaying web pages. I want my browser to start quickly when I click something, then respond quickly and display website content correctly and promptly. If I ever want to do something else with my browser, it would be nice if I could actively enable this through a plugin.

Now, regarding the problem just described, the argument is often made that the many features are intended to reach a broad user base. Especially if an application has all possible options enabled from the start, it quickly engages inexperienced users who don’t have to first figure out how the program actually works. I can certainly understand this reasoning. It’s perfectly fine for a manufacturer to focus exclusively on inexperienced users. However, there is a middle ground that considers all user groups equally. This solution isn’t new and is very well-known: the so-called product lines.

In the past, manufacturers always defined target groups such as private individuals, businesses, and experts. These user groups were then often assigned product names like Home, Enterprise, and Ultimate. This led to everyone wanting the Ultimate version. This phenomenon is called Fear Of Missing Out (FOMO). Therefore, the names of the product groups and their assigned features are psychologically poorly chosen. So, how can this be done better?

An expert focuses their work on specific core functions that allow them to complete tasks quickly and without distractions. For me, this implies product lines like Essentials, Pure, or Core.

If the product is then intended for use by multiple people within the company, it often requires additional features such as external user management like LDAP or IAM. This specialized product line is associated with terms like Enterprise, Company, Business, and so on.

The cluttered end result, actually intended for noobs, has all sorts of things already activated during installation. If people don’t care about the application’s startup and response time, then go for it. Go all out. Throw in everything you can! Here, names like Ultimate, Full, and Maximized Extended are suitable for labeling the product line. The only important thing is that professionals recognize this as the cluttered version.

Those who cleverly manage these product lines and provide as many functions as possible via so-called modules, which can be installed later, enable high flexibility even in expert mode, where users might appreciate the occasional additional feature.

If you install tracking on the module system beforehand to determine how professional users upgrade their version, you’ll already have a good idea of what could be added to the new version of Essentials. However, you shouldn’t rely solely on downloads as the decision criterion for this tracking. I often try things out myself and delete extensions faster than the installation process took if I think they’re useless.

I’d like to give a small example from the DevOps field to illustrate the problem I just described. There’s the well-known GitLab, which was originally a pure code repository hosting project. The name still reflects this today. An application that requires 8 GB of RAM on a server in its basic installation just to make a Git repository accessible to other developers is unusable for me, because this software has become a jack-of-all-trades over time. Slow, inflexible, and cluttered with all sorts of unnecessary features that are better implemented using specialized solutions.

In contrast to GitLab, there’s another, less well-known solution called SCM-Manager, which focuses exclusively on managing code repositories. I personally use and recommend SCM-Manager because its basic installation is extremely compact. Despite this, it offers a vast array of features that can be added via plugins.

I tend to be suspicious of solutions that claim to be all-in-one. To me, it always amounts to the same thing: they can do everything, and nothing properly. There’s no such thing as a jack-of-all-trades, or as we say in Austria, a miracle worker!

When selecting programs for my workflow, I focus solely on their core functionality. Are the basic features promised by the marketing truly present and as intuitive as possible? Is there comprehensive documentation that goes beyond a simple “Hello World”? Does the developer focus on continuously optimizing core functions and consider new, innovative concepts? These are the questions that matter to me.

Especially in commercial environments, programs are often used that don’t deliver on their marketing promises. Instead of choosing what’s actually needed to complete tasks, companies opt for applications whose descriptions are crammed with buzzwords. That’s why I believe that companies that refocus on their core competencies and use highly specialized applications for them will be the winners of tomorrow.

Mismanagement and Alpha Geeks

When I recently picked up Camille Fournier’s book “The Manager’s Path,” I was immediately reminded of Tom DeMarco. He co-authored the classic “Peopleware” and, in 2008, “Adrenaline Junkies and Template Zombies.” It’s a list of stereotypes you might encounter in software projects, with advice on how to deal with them. After several decades in the business, I can confirm every single word from my own experience. And it’s still relevant today, because people are the ones who make projects, and we all have our quirks.

For projects to be successful, it’s not just technical challenges that need to be overcome. Interpersonal relationships also play a crucial role. One important factor in this context, which often receives little attention, is project management. There are shelves full of excellent literature on how to become a good manager. The problem, unfortunately, is that few who hold this position actually fulfill it, and even fewer are interested in developing their skills further. The result of poor management is worn-down and stressed teams, extreme pressure in daily operations, and often also delayed delivery dates. It’s no wonder, then, that this impacts product quality.

One of the first sayings I learned in my professional life was: “Anyone who thinks a project manager actually manages projects also thinks a butterfly folds lemons.” So it seems to be a very old piece of wisdom. But what is the real problem with poor management? Anyone who has to fill a managerial position has a duty to thoroughly examine the candidate’s skills and suitability. It’s easy to be impressed by empty phrases and a list of big names in the industry on a CV, without questioning actual performance. In my experience, I’ve primarily encountered project managers who lacked the technical expertise needed to make important decisions. It wasn’t uncommon for managers in IT projects to dismiss me with the words, “I’m not a technician, sort this out amongst yourselves.” This is obviously disastrous when the person who’s supposed to make the decisions can’t make them because they lack the necessary knowledge. An IT project manager doesn’t need to know which algorithm terminates faster. Evaluations can be used to inform such decisions. However, a basic understanding of programming is essential. Anyone who doesn’t know what an API is, or why version incompatibilities prevent modules that will later be combined into a software product from working together, has no right to act as a decision-maker. A fundamental understanding of software development processes and the programming paradigms used is also indispensable for project managers who don’t work directly with the code.

I therefore advocate for vetting not only the developers you hire for their skills, but also the managers who are to be brought into a company. For me, external project management is an absolute no-go when selecting my projects. This almost always leads to chaos and frustration for everyone involved, which is why I reject such projects. Managers who are not integrated into the company and whose performance is evaluated based on project success, in my experience, do not deliver high-quality work. Furthermore, internal managers, just like developers, can develop and expand their skills through mentoring, training, and workshops. The result is a healthy, relaxed working environment and successful projects.

The title of this article points to toxic stereotypes in the project business. I’m sure everyone has encountered one or more of these stereotypes in their professional environment. There’s a lot of discussion about how to deal with these individuals. However, I would like to point out that hardly anyone is born a “monster.” People are the way they are, a result of their experiences. If a colleague learns that looking stressed and constantly rushed makes them appear more productive, they will perfect this behavior over time.

Camille Fournier aptly described this with the term “The Alpha Geek.” Someone who has made their role in the project indispensable and has an answer for everything. They usually look down on their colleagues with disdain, but can never truly complete a task without others having to redo it. Unrealistic estimates for extensive tasks are just as typical as downplaying complex issues. Naturally, this is the darling of all project managers who wish their entire team consisted of these “Alpha Geeks.” I’m quite certain that if this dream could come true, it would be the ultimate punishment for the project managers who create such individuals in the first place.

To avoid cultivating “alpha geeks” within your company, it’s essential to prevent personality cults and avoid elevating personal favorites above the rest of the team. Naturally, it’s also crucial to constantly review work results. Anyone who marks a task as completed but requires rework should be reassigned until the result is satisfactory.

Personally, I share Tom DeMarco’s view on the dynamics of a project. While productivity can certainly be measured by the number of tasks completed, other factors also play a crucial role. My experience has taught me that, as mentioned earlier, it’s essential to ensure employees complete all tasks fully and thoroughly before taking on new ones. Colleagues who downplay a task or offer unrealistically low estimates should be assigned that very task. Furthermore, there are colleagues who, while having relatively low output, contribute significantly to team harmony.

When I talk about people who build a healthy team, I don’t mean those who simply hand out sweets every day. I’m referring to those who possess valuable skills and mentor their colleagues. These individuals typically enjoy a high level of trust within the team, which is why they often achieve excellent results as mediators in conflicts. It’s not the people who try to be everyone’s darling with false promises, but rather those who listen and take the time to find a solution. They are often the go-to people for everything and frequently have a quiet, unassuming demeanor. Because they have solutions and often lend a helping hand, they themselves receive only average performance ratings in typical process metrics. A good manager quickly recognizes these individuals because they are generally reliable. They are balanced and appear less stressed because they proceed calmly and consistently.

Of course, much more could be said about the stereotypes in a software project, but I think the points already made provide a good basic understanding of what I want to express. An experienced project manager can address many of the problems described as they arise. This naturally requires solid technical knowledge and some interpersonal skills.

Of course, we must also be aware that experienced project managers don’t just appear out of thin air. They need to be developed and supported, just like any other team member. This definitely includes rotations through all technical departments, such as development, testing, and operations. Paradigms like pair programming are excellent for this. The goal isn’t to turn a manager into a programmer or tester, but rather to give them an understanding of the daily processes. This also strengthens confidence in the skills of the entire team, and mentalities like “you have to control and push lazy and incompetent programmers to get them to lift a finger” don’t even arise. In projects that consistently deliver high quality and meet their deadlines, there’s rarely a desire to introduce every conceivable process metric.

RTFM – usable documentation

An old master craftsman used to say: “He who writes, remains.” His primary intention was to obtain accurate measurements and weekly reports from his journeymen. He needed this information to issue correct invoices, which was crucial to the success of his business. This analogy can also be readily applied to software development. It wasn’t until Ruby, the programming language developed in Japan by Yukihiro Matsumoto, had English-language documentation that Ruby’s global success began.

We can see, therefore, that documentation can be of considerable importance to the success of a software project. It’s not simply a repository of information within the project where new colleagues can find necessary details. Of course, documentation is a rather tedious subject for developers. It constantly needs to be kept up-to-date, and they often lack the skills to put their own thoughts down on paper in a clear and organized way for others to understand.

I myself first encountered the topic of documentation many years ago through reading the book “Software Engineering” by Johannes Siedersleben. Ed Yourdon was quoted there as saying that before methods like UML, documentation often took the form of a Victorian novella. During my professional life, I’ve also encountered a few such Victorian novellas. The frustrating thing was: after battling through the textual desert—there’s no other way to describe the feeling than as overcoming and struggling—you still didn’t have the information you were looking for. To paraphrase Goethe’s Faust: “So here I stand, poor fool, no wiser than before.”

Here we already see a first criticism of poor documentation: inappropriate length and a lack of information. We must recognize that writing isn’t something everyone is born with. After all, you became a software developer, not a book author. For the concept of “successful documentation,” this means that you shouldn’t force anyone to do it and instead look for team members who have a knack for it. This doesn’t mean, however, that everyone else is exempt from documentation tasks. Their input is essential for quality. Proofreading, pointing out errors, and suggesting additions are all necessary tasks that can easily be shared.

It’s highly advisable to provide occasional rhetorical training for the team or individual team members. The focus should be on precise, concise, and understandable expression. This also involves organizing your thoughts so they can be put down on paper and follow a clear and coherent structure. The resulting improved communication has a very positive impact on development projects.

Up-to-date documentation that is easy to read and contains important information quickly becomes a living document, regardless of the format chosen. This is also a fundamental concept for successful DevOps and agile methodologies. These paradigms rely on good information exchange and address the avoidance of information silos.

One point that really bothers me is the statement: “Our tests are the documentation.” Not all stakeholders can program and are therefore unable to understand the test cases. Furthermore, while tests demonstrate the behavior of functions, they don’t inherently demonstrate their correct usage. Variations of usable solutions are also usually missing. For test cases to have a documentary character, it’s necessary to develop specific tests precisely for this purpose. In my opinion, this approach has two significant advantages. First, the implementation documentation remains up-to-date, because changes will cause the test case to fail. Another positive effect is that the developer becomes aware of how their implementation is being used and can correct a flawed design in a timely manner.
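What such a test written specifically for documentation purposes could look like is sketched below. Everything in it is hypothetical: the utility `Slug`, its behavior, and the class `SlugUsageDocumentation` were invented for this illustration, and plain Java checks stand in for a unit test framework so the sketch stays self-contained. The point is that each method name describes one intended usage and that the example breaks as soon as the implementation changes.

```java
import java.util.Locale;

/** Hypothetical utility, used only to have something to document. */
final class Slug {
    private Slug() { }

    /** Turns a title into a URL-friendly slug. */
    static String of(String title) {
        if (title == null || title.isBlank()) {
            throw new IllegalArgumentException("title must not be blank");
        }
        return title.trim()
                .toLowerCase(Locale.ROOT)
                .replaceAll("[^a-z0-9]+", "-")   // every run of other characters becomes one hyphen
                .replaceAll("(^-+)|(-+$)", "");  // no hyphen at the start or end
    }
}

/** Each method documents one intended usage; the names read like a table of contents. */
final class SlugUsageDocumentation {

    static void typicalUsageTitleBecomesLowercaseWordsJoinedByHyphens() {
        check(Slug.of("Maven Wrapper").equals("maven-wrapper"));
    }

    static void punctuationAndSurroundingWhitespaceAreDropped() {
        check(Slug.of("  Unit & Integration Testing ").equals("unit-integration-testing"));
    }

    static void misuseBlankTitleIsRejected() {
        try {
            Slug.of("   ");
            throw new AssertionError("expected an IllegalArgumentException");
        } catch (IllegalArgumentException expected) {
            // documented behavior: callers must supply a non-blank title
        }
    }

    private static void check(boolean condition) {
        if (!condition) {
            throw new AssertionError("documented behavior no longer holds");
        }
    }

    public static void main(String[] args) {
        typicalUsageTitleBecomesLowercaseWordsJoinedByHyphens();
        punctuationAndSurroundingWhitespaceAreDropped();
        misuseBlankTitleIsRejected();
        System.out.println("usage documentation is up to date");
    }
}
```

Unlike an ordinary regression test, the cases are chosen for the reader: one typical call, one variation, and one example of misuse.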

Of course, there are now countless technical solutions that are suitable for different groups of people, depending on their perspective on the system. Issue and bug tracking systems, such as the commercial JIRA or the open-source Redmine, map entire processes. They allow testers to assign identified problems and errors in the software to a specific release version. Project managers can use release management to prioritize fixes, and developers document the implemented fixes. That’s the theory. In practice, I’ve seen in almost every project how the comment function in these systems is misused as a chat to describe the change status. The result is a bug report with countless useless comments, and any real, relevant information is completely missing.

Another widespread technical solution in development projects is the use of enterprise wikis. They enhance simple wikis with navigation and allow the creation of closed spaces where only explicitly authorized user groups receive granular permissions such as read or write. Besides the widely used commercial solution Confluence, there’s also a free alternative called BlueSpice, which is based on MediaWiki. Wikis allow collaborative work on a document, and individual pages can be exported as PDFs in various formats. To ensure that the wiki pages remain usable, it’s important to maintain clean and consistent formatting. Tables should fit their content onto a single A4 page without unwanted line breaks. This improves readability. There are also many instances where bulleted lists are preferable to tables for the sake of clarity.

This brings us to another very sensitive topic: graphics. It’s certainly true that a picture is often worth a thousand words. But not always! When working with graphics, it’s important to be aware that images often require a considerable amount of time to create and can often only be adapted with significant effort. This leads to several conclusions to make life easier. A standard program (format) should be used for creating graphics. Expensive graphics programs like Photoshop and Corel should be avoided. Graphics created for wiki pages should be attached to the wiki page in their original, editable form. A separate repository can also be set up for this purpose to allow reuse in other projects.

If an image offers no added value, it’s best to omit it. Here’s a small example: It’s unnecessary to create a graphic depicting ten stick figures with a role name or person underneath. Here, it is advisable to create a simple list, which is also easier to supplement or adapt.

But you should also avoid overloaded graphics. Following the motto “more is better” backfires here: overly detailed graphics tend to cause confusion and can lead to misinterpretations. A recommended book is “Documenting and Communicating Software Architectures” by Stefan Zörner. In this book, he effectively demonstrates the importance of different perspectives on a system and which groups of people are addressed by a specific viewpoint. I would also like to take this opportunity to share his seven rules for good documentation:

- Write from the reader’s perspective.

- Avoid unnecessary repetition.

- Avoid ambiguity; explain notation if necessary.

- Use standards such as UML.

- Include the reasons (why).

- Keep the documentation up-to-date, but never too up-to-date.

- Review the usability.

Anyone tasked with writing the documentation, or with ensuring its progress and accuracy, should always make sure that it contains the important information and presents it correctly and clearly. Concise and well-organized documentation can be easily adapted and expanded as the project progresses. Adjustments are most successful when the affected area is as cohesive as possible and appears only once. This centralization is achieved through references and hyperlinks, so that changes in the original document are reflected wherever it is referenced.

Of course, there is much more to say about documentation; it’s the subject of numerous books, but that would go beyond the scope of this article. My main goal was to raise awareness of this topic, as paradigms like Agile and DevOps rely on a good flow of information.

BugChaser – The limits of test coverage

The paradigms now established in software engineering, such as Test-Driven Development (TDD) and Behavior-Driven Development (BDD), along with correspondingly easy-to-use tools, have opened up a new, pragmatic perspective on the topic of software testing. Automated tests are a crucial factor in commercial software projects. Therefore, in this context, a successful testing strategy is one in which test execution proceeds without human intervention.

Test automation forms the basis for achieving stability and reducing risk in critical tasks. Such critical tasks include, in particular, refactoring, maintenance, and bug fixes. All these activities share one common goal: preventing new errors from creeping into the code.

In his 1972 article “The Humble Programmer,” Edsger W. Dijkstra stated the following:

“Program testing can be a very effective way to show the presence of bugs, but is hopelessly inadequate for showing their absence.”

Therefore, simply automating test execution is not sufficient to ensure that changes to the codebase do not have unintended effects on existing functionality. For this reason, the quality of the test cases must be evaluated. Proven tools already exist for this purpose.

Before we delve deeper into the topic, let’s first consider what automated testing actually means. This question is quite easy to answer. Almost every programming language has a corresponding unit test framework. Unit tests call a method with various parameters and compare the return value with an expected value. If both values match, the test is considered passed. Additionally, it can also be checked whether an exception was thrown.
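To make this concrete, here is a minimal sketch without any test framework. The class `DiscountExample` and its method `applyDiscount` are hypothetical names invented for this illustration. In a real project, a framework such as JUnit would take over the comparison and the reporting.

```java
class DiscountExample {

    /** Hypothetical method under test: reduces the price by a percentage (0 to 100). */
    static double applyDiscount(double price, int percent) {
        if (percent < 0 || percent > 100) {
            throw new IllegalArgumentException("percent must be between 0 and 100");
        }
        return price * (100 - percent) / 100.0;
    }

    /** Passes if the return value matches the expected value. */
    static boolean returnsExpectedValue() {
        double expected = 80.0;
        double actual = applyDiscount(100.0, 20);
        return Math.abs(expected - actual) < 1e-9;
    }

    /** Passes if the invalid argument is rejected with an exception. */
    static boolean throwsOnInvalidInput() {
        try {
            applyDiscount(100.0, 120);
            return false; // no exception was thrown, so the test fails
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println("returnsExpectedValue: " + returnsExpectedValue());
        System.out.println("throwsOnInvalidInput: " + throwsOnInvalidInput());
    }
}
```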

If a method has no return value or does not throw an error, it cannot be tested directly. Methods marked as private, or inner classes, are also not easily testable, as they cannot be called directly. These must be tested indirectly through public methods that call the ‘hidden’ methods.

When dealing with methods marked as private, it is not an option to access and test the functionality they represent using techniques such as the Reflection API. We must be aware that such methods are often also used to encapsulate code fragments to avoid duplication.

    import java.util.ArrayList;
    import java.util.List;

    public boolean method() {
        boolean success = false;
        List<Integer> collector = new ArrayList<>();
        collector.add(1);
        collector.add(2);
        collector.add(3);

        // the private helper is exercised indirectly through this public method
        sortAsc(collector);
        if (collector.get(0).equals(1)) {
            success = true;
        }
        return success;
    }

    private void sortAsc(List<Integer> collection) {
        // ascending order: the smallest element comes first
        collection.sort((a, b) -> a.compareTo(b));
    }

Therefore, to effectively write automated tests, it is necessary to follow a certain coding style. The preceding Listing 1 simply demonstrates what testable code can look like.

Since developers also write the corresponding component tests for their own implementations, the problem of difficult-to-test code is largely eliminated in projects that follow a test-driven approach. The motivation to test now lies with the developer, as this paradigm allows them to determine whether their implementation behaves as intended. However, we must ask ourselves: Is that all we need to do to develop good and stable software?

As we might expect with such questions, the answer is no. An essential tool for evaluating the quality of tests is achieving the highest possible test coverage. A distinction is made between branch and line coverage. To illustrate the difference more clearly, let’s briefly look at the pseudocode in Listing 2.

    if ( Expression-A OR Expression-B ) {
        print('allow');
    } else {
        print('decline');
    }

Our goal is to execute every line of code if possible. To achieve this, we already need two separate test cases: one for entering the IF branch and one for entering the ELSE branch. However, to achieve 100% branch coverage, we must cover all variations of the IF branch. In this example, that means one test that makes Expression A true, and another test that makes Expression B true. This results in a total of three different test cases.
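The three test cases can be sketched in Java as follows. The class `AccessDecision` and the method `decide` are hypothetical names; the method simply mirrors the pseudocode and returns the text instead of printing it, so that the result can be compared.

```java
class AccessDecision {

    /** Java version of the pseudocode: allow if at least one expression holds. */
    static String decide(boolean expressionA, boolean expressionB) {
        if (expressionA || expressionB) {
            return "allow";
        } else {
            return "decline";
        }
    }

    public static void main(String[] args) {
        // Test 1: Expression A is true, the IF branch is entered.
        System.out.println(decide(true, false));
        // Test 2: only Expression B is true, the IF branch is entered a second way.
        System.out.println(decide(false, true));
        // Test 3: both expressions are false, the ELSE branch is entered.
        System.out.println(decide(false, false));
    }
}
```

Tests 1 and 3 alone already execute every line. Test 2 adds the remaining variation of the condition.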

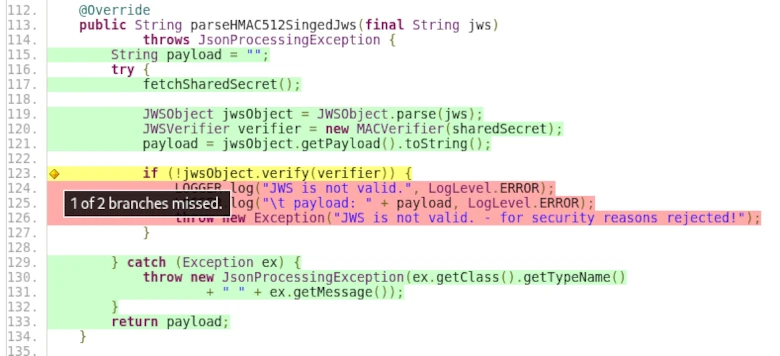

The screenshot from the TP-CORE project shows what such test coverage can look like in ‘real-world’ projects.

Of course, this example is very simple, and in real life, there are often constructs where, despite all efforts, it’s impossible to reach all lines or branches. Exceptions from third-party libraries that need to be caught but cannot be triggered under normal circumstances are a typical example.

For this reason, while we strive to achieve the highest possible test coverage and naturally aim for 100%, there are many cases where this is not feasible. However, a test coverage of 90% is quite achievable. A value of around 85% is often cited as the target for commercial projects. Based on these observations, we can say that test coverage correlates with the testability of an application. This means that test coverage is a suitable measure of testability.

However, it must also be acknowledged that the test coverage metric has its limitations. Regular expressions and annotations for data validation are just a few simple examples of where test coverage alone is not a sufficient indicator of quality.

Without going too much into the implementation details, let’s imagine we had to write a regular expression to validate input against a correct 24-hour time format. If we don’t keep the correct interval in mind, our regular expression might be incorrect. The correct interval for the 24-hour format is 00:00 – 23:59. Examples of invalid values are 24:00 or 23:60. If we are unaware of this fact, errors can remain hidden in our application despite test cases, only to surface and cause problems when the application is actually used.
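A small sketch shows how such an error can hide behind full coverage. The class `TimeFormat` and both patterns are my own illustration: `NAIVE` looks plausible but ignores the interval, while `VALID` restricts hours to 00 to 23 and minutes to 00 to 59. A test suite that only checks `12:30` and `ab:cd` passes for both patterns and executes every line.

```java
import java.util.regex.Pattern;

class TimeFormat {

    // Looks plausible, but also accepts values such as 24:00 or 23:60.
    static final Pattern NAIVE = Pattern.compile("[0-2]\\d:[0-6]\\d");

    // Hours 00 to 23, minutes 00 to 59.
    static final Pattern VALID = Pattern.compile("([01]\\d|2[0-3]):[0-5]\\d");

    static boolean isValidNaive(String time) {
        return NAIVE.matcher(time).matches();
    }

    static boolean isValid(String time) {
        return VALID.matcher(time).matches();
    }

    public static void main(String[] args) {
        // Boundary values are what separates the two patterns.
        for (String time : new String[] {"00:00", "23:59", "24:00", "23:60"}) {
            System.out.println(time + " naive=" + isValidNaive(time)
                    + " valid=" + isValid(time));
        }
    }
}
```

Only test cases at the boundaries of the interval reveal the difference between the two expressions.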

“… In a few cases, participants were unable to think of alternative solutions …”

The question here was whether error correction always represents the optimal solution. Beyond that, it would be necessary to clarify what constitutes an optimal solution in commercial software development projects. The statement that there are cases in which developers only know or understand one way of doing things is very illustrative. This is also reflected in our example of regular expressions (RegEx). Software development is a thought process that cannot be accelerated. Our thinking is determined by our imagination, which in turn is influenced by our experience.

This leads us to another source of errors: the test cases themselves. A classic example is incorrect comparisons in collections, such as comparing arrays. The problem we are dealing with here is how variables are accessed: call by value or call by reference. With arrays, access is via call by reference, i.e., directly to the memory location. If you now assign an array to a new variable and compare both variables, they will always be the same because you are comparing the array with itself. This is an example of a test case that is essentially meaningless. However, as long as the implementation is correct, this faulty test case will never cause any problems, which is exactly why it goes unnoticed.
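The following sketch contrasts a meaningless check with a meaningful one. The class `ArrayComparison` and the method `sorted` are hypothetical and stand in for any implementation that returns an array. In Java, an array variable holds a reference to the array object, so an assignment copies the reference and not the content.

```java
import java.util.Arrays;

class ArrayComparison {

    /** Hypothetical method under test: returns the values in ascending order. */
    static int[] sorted(int[] values) {
        int[] copy = values.clone();
        Arrays.sort(copy);
        return copy;
    }

    /** Meaningless check: both variables refer to the same array object. */
    static boolean meaninglessCheck() {
        int[] result = sorted(new int[] {3, 1, 2});
        int[] expected = result;    // copies the reference, not the content
        return expected == result;  // always true, whatever sorted() returns
    }

    /** Meaningful check: an independent expected array is compared by content. */
    static boolean meaningfulCheck() {
        int[] expected = {1, 2, 3};
        int[] result = sorted(new int[] {3, 1, 2});
        return Arrays.equals(expected, result);
    }

    public static void main(String[] args) {
        System.out.println("meaningless check: " + meaninglessCheck());
        System.out.println("meaningful check:  " + meaningfulCheck());
    }
}
```

The first check would still pass if `sorted` returned the values in the wrong order; only the second check can fail.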

This realization shows us that blindly striving for complete test coverage is not conducive to quality. Of course, it’s understandable that this metric is highly valued by management. However, we have also been able to demonstrate that one cannot rely on it alone. We therefore see that there is also a need for code inspections and refactorings for test cases. Since it’s impossible to read and understand all the code from beginning to end due to time constraints, it’s important to focus on problematic areas. But how can we find these problem areas? A relatively new technique helps us here. The theoretical work on this is already somewhat older; it just took a while for corresponding implementations to become available.

Way out of the Merging-Hell

Abstract: Source Control Management (SCM) tools have a long tradition in the software development process and occupy an important place in the daily work of any development team. The first documented system of this type, SCCS, appeared in 1975 and was described by Rochkind [1]. To date, a large number of other SCM systems have appeared in centralized or distributed forms. Subversion (SVN) is an example of the centralized variants, while Git is a representative of the distributed solutions. Each new system brings many performance improvements and also a lot of new concepts. In “The History of Version Control” [2], Ruparelia gives an overview of the evolution of various free and commercial SCM systems. However, there is one basic use that all these systems have in common: branching and merging. As simple as the concept seems, forking a code baseline into a new branch and merging the changes back together later is difficult for SCM systems to deal with. Giant pitfalls during branching and merging can cause a huge number of merge conflicts that cannot be handled manually. This article discusses why and where semantic merge conflicts occur and what techniques can be used to avoid them.

To cite this article: Marco Schulz. Way out of the Merging-Hell. Journal of Research in Engineering and Computer Sciences. February 2024, Vol. 2, No. 1, pp. 28-43 doi: 10.13140/RG.2.2.27559.66727

Download the PDF: https://hspublishing.org/JRECS/article/view/343/295

1. Introduction

When we think about Source Control Management systems and their use, two core functionalities emerge. The most important and therefore the first function to be mentioned is the recording and management of changes to an existing code base. A single code change managed by SCM is called a revision. A revision can consist of any number of changes to only one file or to any number of files. This means a revision is equivalent to a version of the code base. Revisions usually have an ancestor and a descendant, so that together they form a directed graph.

The second essential functionality is that SCM systems allow multiple developers to work on the same code base. This means that each developer creates a separate revision for the changes they make. This makes it very easy to track who made a change to a particular file at what time.

The collaborative aspect in particular can turn into a so-called merging hell if used clumsily. These problems can occur even with a simple linear approach, without further branching. It can happen that locally made changes cannot be integrated into a new revision because of too many semantic conflicts. Therefore, we discuss in detail in the following section why merge conflicts occur at all.

The term DevOps has been established in the software industry since around 2010. It describes the interaction between development (Dev) and operations (Ops). DevOps is a collection of concepts and methodologies around the software development process that are meant to ensure the productivity of the development team. Classical Configuration Management, as described among other places in the “SWEBOK – Guide to the Software Engineering Body of Knowledge” [5], has been absorbed, like other special disciplines, into the new term DevOps. From a technical point of view, Software Configuration Management deals very intensively with the efficient use of SCM systems. This leads us to the branch models and from there directly to the next section, which discusses the different merge strategies.

Another topic is the examination of selected SCM workflows and concepts of repository organization. This point is also an important part of the domain of Configuration Management. Many proven best practices can be described by the theory of expected conflict sets, which I introduce in the last section before the conclusion. This leads to the thesis that the semantic merge conflicts arising in SCM systems are caused by a lack of Continuous Integration (CI) and could be resolved recursively via partial merges.

2. How merge conflicts arise

If we think about how semantic merge conflicts arise in SCM systems, the pattern that occurs is always the same. Illustrating it does not require long-running or complex constructs with many branches.

Even a simple test that can be performed in a few moments reveals the problem. Only one branch is needed, which is called main in a freshly created Git repository. A simple text file with the name test.txt is added to this branch. The file test.txt contains exactly one line with the following content: “version=1.0-SNAPSHOT”. The text file filled in this way is first committed to the local repository and then pushed to the remote repository. This state describes revision 1 of the test.txt file and is the starting point for the following steps.

A second person now checks out the repository with the main branch to their own system using the clone command. The contents of test.txt are then changed as follows: “version=1.0.0” and transferred to the remote repository again. This gives the test.txt file revision 2.

Meanwhile, person 1 changes the content for test.txt in their own workspace to: “version=1.0.1” and commits the changes to their local repository.

If person 1 tries to push their changes to the shared remote repository, they will first be prompted to pull the changes made in the meantime from the remote repository into the local repository. When this operation is performed, a conflict arises that cannot be resolved automatically.

Certainly, the remark would be justified at this point that Git is a decentralized SCM. The question arises whether the described experiment can also be transferred to centralized SCM systems in this arrangement. Would the centralized Subversion (SVN) end with the same result as the decentralized Git? The answer to this is a clear YES. The major difference between centralized and decentralized SCM systems is that decentralized SCM tools create a copy of the remote repository locally, which is not the case with centralized representatives. Therefore, decentralized solutions need two steps to create a revision in the remote repository, while centralized tools do not need the intermediate step via the local repository.

Before we now turn to the question of why the conflict occurred, let’s take a brief look at Figure 2.02, which once again graphically depicts the sequence of the scenario.

Using the following Listing 2.01, the experiment can be recreated independently at any time. It is only important that the sequence of the individual steps is not changed.

    # user 1 (ED)
    git init --bare
    git clone <repository>
    echo "version=1.0-SNAPSHOT" > test.txt
    git add test.txt
    git commit -m "create revision 1."
    git push <repository>

    # user 2 (Elmar Dott)
    git clone <repository>
    echo "version=1.0.0" > test.txt
    git commit -am "create revision 2."
    git push <repository>

    # user 1 (ED)
    echo "version=1.0.1" > test.txt
    git commit -am "create revision 3."
    git push <repository>    # rejected: the remote already contains revision 2
    git pull --no-rebase     # ! conflict !

Listing 2.01: A test setup for creating a conflict on the command line.

The result of the described experiment is not surprising, because SCM systems are usually line-based. If we now have changes in a file in the same line, automatic algorithms like the 3-way-merge based on the O(ND) Difference Algorithm discussed by Myers [3] cannot make a decision. This is expected, because the change has a semantic meaning that only the author knows. This then leads to the user having to manually intervene to resolve the conflict.
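The decision a 3-way merge has to make for a single line can be sketched in a few lines of Java. This is a simplified illustration of the principle and neither Git's implementation nor the difference algorithm by Myers; the class `ThreeWayLineMerge` and its method are hypothetical names. The input consists of the common ancestor (base) and the two edited versions of the line.

```java
import java.util.Optional;

class ThreeWayLineMerge {

    /**
     * Merges one line from the common ancestor (base) and both edited versions.
     * Returns an empty Optional if both sides changed the line differently.
     */
    static Optional<String> merge(String base, String ours, String theirs) {
        if (ours.equals(theirs)) {
            return Optional.of(ours);   // both sides agree
        }
        if (ours.equals(base)) {
            return Optional.of(theirs); // only the other side changed the line
        }
        if (theirs.equals(base)) {
            return Optional.of(ours);   // only our side changed the line
        }
        return Optional.empty();        // conflict: the author has to decide
    }

    public static void main(String[] args) {
        // The scenario of the experiment: both users changed the same line.
        Optional<String> result =
                merge("version=1.0-SNAPSHOT", "version=1.0.1", "version=1.0.0");
        System.out.println(result.isPresent() ? result.get() : "! conflict !");
    }
}
```

As long as only one side touches a line, the ancestor tells the algorithm which version is the newer one. Once both sides differ from the ancestor and from each other, no rule is left to apply.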

To find a suitable solution for resolving the conflict, there are powerful tools that compare the changes of the two versions. The underlying theoretical work on the 2-way-merge can be found, among others, in the paper “Syntactic Software Merging” [4] by Buffenbarger.

To explore the problem further, we look at the ways in which different versions of a file can arise. Since SCM systems are line-based, we focus on the states that a single line can take:

- unchanged

- modified

- deleted / removed

- added

- moved

It can already be guessed that moving larger text blocks within a file can also lead to conflicts. The objection could be raised that such a case is of a rather theoretical nature and has little practical relevance. However, I must vehemently contradict this, since I was confronted with exactly this problem very early in my professional career.

Imagine a graphical editor in which you can create BPMN processes, for example. Such an editor saves the process description in an XML file so that it can then be processed programmatically. As a pure ASCII text file, XML can be placed under Configuration Management with an SCM system without any problems. If the graphical editor uses the event-driven SAX implementation in Java to edit the XML structure, the changed blocks are usually moved to the end of the context block within the file.

If different blocks within the file are processed simultaneously, conflicts will occur. As a rule, these conflicts cannot be resolved manually with reasonable effort. The solution at that time was a strict coordination between the developers to clarify when the file is released for editing.

In larger teams, which may also work together across large distances, this can lead to massive delays. A simple solution would be to lock the corresponding file so that no editing by another user is possible. However, this approach is rather questionable in the long run. Just think of a locked file that cannot be processed further because the person in question fell ill at short notice.

It is much more elegant to introduce an automated step that formats such files according to a specified coding guide before a commit. However, care must be taken to strictly preserve the semantics within the file.
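A minimal sketch of such a formatting step for XML files could look like the following. It is an illustration under assumptions: the class `XmlNormalizer` is a hypothetical name, and the step only normalizes whitespace between elements by using the XML APIs of the JDK. Whitespace-only text nodes are removed, which is only safe for formats in which this whitespace carries no meaning. The order of elements is deliberately left untouched in order to preserve the semantics.

```java
import java.io.StringReader;
import java.io.StringWriter;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.transform.OutputKeys;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.dom.DOMSource;
import javax.xml.transform.stream.StreamResult;
import org.w3c.dom.Document;
import org.w3c.dom.Node;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

class XmlNormalizer {

    /** Parses the XML text and writes it back with uniform indentation. */
    static String normalize(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new InputSource(new StringReader(xml)));
            stripWhitespace(doc.getDocumentElement());
            Transformer transformer = TransformerFactory.newInstance().newTransformer();
            transformer.setOutputProperty(OutputKeys.INDENT, "yes");
            transformer.setOutputProperty(OutputKeys.OMIT_XML_DECLARATION, "yes");
            StringWriter out = new StringWriter();
            transformer.transform(new DOMSource(doc), new StreamResult(out));
            return out.toString();
        } catch (Exception e) {
            throw new IllegalArgumentException("not a well-formed XML document", e);
        }
    }

    /** Removes text nodes that contain nothing but whitespace. */
    private static void stripWhitespace(Node node) {
        NodeList children = node.getChildNodes();
        // iterate backwards because the node list changes while removing
        for (int i = children.getLength() - 1; i >= 0; i--) {
            Node child = children.item(i);
            if (child.getNodeType() == Node.TEXT_NODE
                    && child.getTextContent().trim().isEmpty()) {
                node.removeChild(child);
            } else {
                stripWhitespace(child);
            }
        }
    }

    public static void main(String[] args) {
        String compact = "<process><task id=\"1\"/><task id=\"2\"/></process>";
        String spread = "<process>\n  <task id=\"1\"/>\n\n      <task id=\"2\"/>\n</process>";
        // Both variants should end up in the same textual form.
        System.out.println(normalize(compact).equals(normalize(spread)));
    }
}
```

If every developer runs the same step before a commit, differences that come only from the layout of the file no longer reach the repository.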

Now that we know the mechanisms of how conflicts arise, we can start thinking about a suitable strategy to avoid conflicts if possible. As we have already seen, automated procedures have some difficulties in deciding which change to use. Therefore, concepts should be found to avoid conflicts from the beginning. The goal is to keep the number of conflicts manageable, so that manual processing can be done quickly, easily and securely.

Since conflicts in day-to-day business mainly occur when merging branches, we turn to the different branch strategies in the following section.

3. Branch models

In older literature, the term branch is often used as a synonym for terms such as stream or tree. In simple terms, a branch is the duplication of an object which can then be further modified in the different versions independently of each other.

Branching the main line into parallel dedicated development branches is one of the most important features of SCM systems that developers are regularly confronted with.

Although the creation of a new branch from any revision is effortless, an ill-considered branch can quickly lead to serious difficulties when merging the different branches later. To get a better grasp of the problem, we will examine the various reasons why it may be necessary to create branches from the main development branch.

Git Flow gives a quite broad overview of different branch strategies. Before I continue with a detailed explanation, however, I would like to note that Git Flow is not optimally suited for all software development projects because of its complexity. A note to this effect, together with an explanation, has been available for some time on the blog of Vincent Driessen [6], who described Git Flow in the article “A successful Git branching model”.

This model was conceived in 2010, now more than 10 years ago, and not very long after Git itself came into being. In those 10 years, git-flow (the branching model laid out in this article) has become hugely popular in many a software team to the point where people have started treating it like a standard of sorts — but unfortunately also as a dogma or panacea. […] This is not the class of software that I had in mind when I wrote the blog post 10 years ago. If your team is doing continuous delivery of software, I would suggest to adopt a much simpler workflow (like GitHub flow) instead of trying to shoehorn git-flow into your team. […]

V. Driessen, 5 March 2020

- Main development branch: current development status of the project. In Subversion this branch is called trunk.

- Developer Branch: isolates the workspace of a developer from the main development branch in order to be able to store as many revisions of their own work as possible without influencing the rest of the team.

- Release Branch: an optional branch that is created when more than one release version is developed at the same time.

- Hotfix Branch: an optional branch that is only created when a correction (Bugfix) has to be made for an existing release. No further development takes place in this branch.

- Feature Branch: parallel development branch to the Main with a life cycle of at least one release cycle in order to encapsulate extensive functionalities.

If you look at the original illustration of Git Flow, you will see branches of branches. It is absolutely necessary to refrain from such a practice. The complexity that arises in this way can only be mastered through strong discipline.

Another small detail in the design of Git Flow is the idea of creating a Hotfix Branch in addition to a Release Branch. In most cases, the release branch is already responsible for the fixes. Whenever a release that is in production needs to be followed up with a fix, a branch is created from the revision of the corresponding release.

However, the situation changes when multiple release versions are under development. In this case it is highly recommended to always keep the changes for the current release on the main development line and to branch off older releases only for subsequent deliveries. This scenario should be reserved for major releases only, as according to Semantic Versioning [7] they contain API changes and thus create incompatibilities per se. This strategy helps to reduce the complexity of the branch model.

Now, however, for the release branches in which new functionality continues to be implemented, it is necessary to be able to supply the releases that are being created there with corrections. To make this distinction, the designation Hotfix Branch is very helpful. This is also reflected in the naming of the branches and is helpful for orientation in the repository.

If a release branch is given a name like Hotfix Branch, the name rules out further functional development for the release in this branch in the future. In principle, branches of level 1 should be named Release_x.x. Branches of level 2 in turn should be called HotFix_x.x or BugFix_x.x etc. This pattern of naming fits in nicely with Semantic Versioning. Branches of a level higher than two should be strictly avoided. On the one hand, such deeper levels increase the complexity of the repository structure, and on the other hand, they create considerable effort in the administration and maintenance of subsequent components of an automated build and deploy pipeline.
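Such a naming convention can be checked automatically, for example in a build pipeline. The following sketch is only an illustration: the class `BranchNames` is a hypothetical name, and it assumes that the placeholder x.x stands for two numeric parts such as 1.2.

```java
import java.util.regex.Pattern;

class BranchNames {

    // Assumption: x.x stands for a numeric major and minor version.
    private static final Pattern RELEASE =
            Pattern.compile("Release_\\d+\\.\\d+");
    private static final Pattern FIX =
            Pattern.compile("(HotFix|BugFix)_\\d+\\.\\d+");

    /** Returns 1 for release branches, 2 for fix branches and 0 for any other name. */
    static int level(String branchName) {
        if (RELEASE.matcher(branchName).matches()) {
            return 1;
        }
        if (FIX.matcher(branchName).matches()) {
            return 2;
        }
        return 0;
    }

    public static void main(String[] args) {
        for (String name : new String[] {"Release_1.2", "HotFix_1.2", "my-branch"}) {
            System.out.println(name + " -> level " + level(name));
        }
    }
}
```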

The following figure puts what has just been described into a visual context. A technical description of Release Management from the perspective of Configuration Management via the creation of branches can be found in the paper “Expressions for Source Control Management Systems” [8], which proposes a vocabulary that helps to improve orientation in source repositories via the commit messages.

Certainly, an experienced configuration manager can correct unfavorably named branches with more or less effort, depending on the SCM used. But it is important to remember that systems connected to the SCM, such as automation servers (also known as Build or CI servers), quality assurance tools such as SonarQube, etc., are also affected by such renaming. All this infrastructure can no longer find the link to the original sources if the name of the branch is changed afterwards. Since this would be a disaster for Release Management, companies often refrain from refactoring the code repositories, which leads to very confusing graphs.

To ensure orientation in the repository, important revisions such as releases should be identified by a tag. This practice ensures that the complexity is not increased unnecessarily. All relevant revisions, so-called points of interest (POI), can be easily found again via a tag.

In contrast to branches, tags can be created arbitrarily in almost any SCM system and removed without leaving any residue. While Git supports the deletion of branches excellently, this is not easily possible with Subversion due to its internal structure.

It is also highly recommended to set an additional tag for releases that are in PRODUCTION. As soon as a release is no longer in production use, the tag that identifies a production release should be deleted. Up-to-date labels for releases in production allow you to decide very quickly for which release a correction has to be delivered.

Particularly in the case of very long-running projects, it is rarely possible, and not exactly sensible, to make a correction in the revision in which the error occurred for the first time, because too much time has passed since then. For reasons of cost efficiency, corrections are therefore limited exclusively to the releases that are in production. We see that the use of release branches can lead to a very complex structure in the long run. Therefore, it is a highly recommended strategy to close release branches that are no longer needed.

For this purpose an additional tag >EOL< can be introduced. EOL indicates that a branch has reached its end of life. These measures visualize the current state of the branches in a repository and help to get a quick overview. In addition, it is also recommended to lock the closed release branches against unintentional changes. Many server solutions such as the SCM Manager or GitLab offer suitable tools for locking branches, directories and individual files. It is also strongly advised against deleting branches that are no longer needed and from which a release was created.

In this section, the different types of branches were introduced in their context. In addition, possibilities were shown how the orientation in the revisions of a repository can be ensured without having to read the source files. The following chapter discusses the various ways in which these branches can be merged.

4. Merging strategies

After we have discussed in detail the motivation for branches of the main development branch, it is now important to examine the different possibilities of merging two branches.

As I already demonstrated in Section 2, conflicts can easily arise in the different versions of a file when merging, even in a linear progression. In this case it is a temporary branch that is immediately merged into a new revision as automatically as possible. If the automated merging fails, it is a semantic conflict that must be resolved manually. With clumsily chosen branch models, the number of such conflicts can be increased so massively that manual merging is no longer possible.

In Figure 4.01 we see the history graph shown by TortoiseGit for the example from Listing 2.01. Although there is no additional branch at this point, we can see branches in the column of the graph. It is a representation of the different versions, which can continue to grow as more developers are involved.

A directed graph is therefore created for each object over time. If this object is frequently affected by edits due to its importance in the project, which are also made by different people, the complexity of the associated graph automatically increases. This effect is amplified if several branches have been created for this object.

The current version of the SCM tool Git supports three different merge strategies that the user can choose from. These are the classic merge, the rebase and cherry picking.

Figure 4.02 shows a schematic representation of the three different merge strategies for the Git SCM. Let’s have a look in detail at what these different strategies are and how they can be used.

– Merge is the best known and most common variant. Here, the last revision of branch B and the last revision of branch A are merged into a new revision C.

– Rebase is a feature included in the SCM Git [9]. Rebase can be understood as a partial commit. This means that for each individual revision in branch B, the corresponding predecessor revision in master branch A is determined and these are merged individually into a new revision in the master. As a consequence, the history of Git is overwritten.

– Cherry picking allows selected revisions from branch A to be transferred to a second branch B.
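The difference between the three strategies can be illustrated with a deliberately simple toy model. It is not how Git works internally: a branch is represented only as a list of revision labels, all names are hypothetical, and a trailing apostrophe marks a revision that was created anew while carrying the same change.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class MergeStrategies {

    /** Merge: one new revision C joins the last revisions of both branches. */
    static List<String> merge(List<String> a, List<String> b) {
        List<String> history = new ArrayList<>(a);
        history.add("C(" + a.get(a.size() - 1) + "+" + b.get(b.size() - 1) + ")");
        return history;
    }

    /** Rebase: every revision of B is replayed on top of A as a new revision. */
    static List<String> rebase(List<String> a, List<String> b) {
        List<String> history = new ArrayList<>(a);
        for (String revision : b) {
            history.add(revision + "'"); // same change, new identity
        }
        return history;
    }

    /** Cherry picking: only the selected revision is copied to the target branch. */
    static List<String> cherryPick(List<String> target, String revision) {
        List<String> history = new ArrayList<>(target);
        history.add(revision + "'");
        return history;
    }

    public static void main(String[] args) {
        List<String> a = Arrays.asList("A1", "A2");
        List<String> b = Arrays.asList("B1", "B2");
        System.out.println(merge(a, b));         // [A1, A2, C(A2+B2)]
        System.out.println(rebase(a, b));        // [A1, A2, B1', B2']
        System.out.println(cherryPick(b, "A2")); // [B1, B2, A2']
    }
}
```

The model makes one point visible: a merge adds a single revision, whereas a rebase produces one new revision for every revision of the rebased branch.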

During my work as a configuration manager, I have experienced in some projects that developers were encouraged to perform every merge as a rebase. Such a procedure is quite critical as Git overwrites the existing history. One effect is that important revisions that represent a release may be changed. This means that the reproducibility of releases is no longer given. This in turn has the consequence that corrections, as discussed in the section on branch models, contain additional code, which in turn has to be tested. This of course destroys the possibility of a simple re-test for correction releases and therefore increases the effort involved.

A tried and tested strategy is to perform every merge as a classic merge. If the number of semantic conflicts that have occurred is too large to be resolved manually, an attempt should be made to rebase. To ensure that the history is affected as little as possible, the branches should be as short-lived as possible and should not have been created before an existing release.

    # preparing
    git init --bare
    git clone <repository>
    echo "Lorem ipsum dolor sit amet, consectetur adipiscing elit," > file_1.txt
    git add file_1.txt
    git commit -m "main branch add file 1"
    echo "Lorem ipsum dolor sit amet, consectetur adipiscing elit," > file_2.txt
    git add file_2.txt
    git commit -m "main branch add file 2"
    echo "Lorem ipsum dolor sit amet, consectetur adipiscing elit," > file_3.txt
    git add file_3.txt
    git commit -m "main branch add file 3"
    echo "Lorem ipsum dolor sit amet, consectetur adipiscing elit," > file_4.txt
    git add file_4.txt
    git commit -m "main branch add file 4"
    echo "Lorem ipsum dolor sit amet, consectetur adipiscing elit," > file_5.txt
    git add file_5.txt
    git commit -m "main branch add file 5"

    # creating a simple history
    echo "sed do eiusmod tempor incididunt ut labore et dolore magna aliqua." >> file_5.txt
    git commit -am "main branch edit file 5"
    echo "sed do eiusmod tempor incididunt ut labore et dolore magna aliqua." >> file_4.txt
    git commit -am "main branch edit file 4"
    # file_3.txt: extend the existing line instead of adding a new one
    echo "Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua." > file_3.txt
    git commit -am "main branch edit file 3"
    echo "sed do eiusmod tempor incididunt ut labore et dolore magna aliqua." >> file_2.txt
    git commit -am "main branch edit file 2"
    echo "sed do eiusmod tempor incididunt ut labore et dolore magna aliqua." >> file_1.txt
    git commit -am "main branch edit file 1"

    # create a branch from: "main branch add file 5" (five commits before HEAD)
    git checkout -b develop HEAD~5
    echo "Content added by a develop branch." >> file_3.txt
    git commit -am "develop branch edit file 3."
    echo "Content added by a develop branch." >> file_4.txt
    git commit -am "develop branch edit file 4"

    # rebase develop onto main
    git rebase main

Listing 4.01: Demonstration of history change by using rebase.

If this experiment is reproduced, the conflicts resulting from the rebase must be resolved sequentially. Only when all individual sequences have been run through is the rebase completed locally. The experiment thus demonstrates what the term partial commit means, in which each conflict must be resolved for each individual commit. Compared to a simple merge, the rebase allows us to break down an enormous number of merge conflicts into smaller and less complex segments. This can enable us to manually resolve an initially unmanageable number of semantic merge conflicts.

However, this help does not come without additional risks. As Figure 4.03 shows us with the output of the log, the history is overwritten. In the experiment described, a develop branch was created by the same user in which the two files 3 and 4 were changed. The two revisions in the Branch in which files 3 and 4 were edited appeared after the rebase in the history for the main Branch.

If rebase is used excessively and without reflection, this can lead to serious problems. If a rebase overwrites a revision from which a release was created, this release cannot be reproduced again as the original sources have been overwritten. If a correction release is now required for this release for which the sources have been overwritten, this cannot be created without further ado. A simple retest is no longer possible and at least the entire test procedure must be run to ensure that no new errors have been introduced.

We can therefore already formulate an initial assumption at this point. Semantic conflicts that result from merging two objects into a new version very often have their origin in a branch strategy that is too complex. We will examine this hypothesis further in the following chapter, which is dedicated to a detailed exploration of the organization of repositories.

5. Code repository organization and quality gates

The example of Listing 2, which shows how a semantic merge conflict arises, demonstrates the problem at the lowest level of complexity. The same applies to the rebase experiment in Listing 4. If we increase the complexity of these examples by involving more users who in turn work in different branches, a statistical correlation can be identified: the higher the number of files in a repository and the more users have write access to this repository, the more likely it is that different users will work on the same file at the same time. This circumstance increases the probability of semantic merge conflicts arising.

This correlation suggests that both the software architecture and the organization of the project in the code repository can have a decisive influence on the occurrence of merge conflicts. Robert C. Martin points out some of these problems in his book Clean Architecture [10].

This thesis is underlined by my own many years of project experience, which have shown that software modules that are kept as compact as possible and can be compiled independently of other modules are best managed in their own repository. This also results in smaller teams and therefore fewer people creating several versions of a file at the same time.

This contrasts with the paper “The issue of Monorepo and Polyrepo in large enterprises” [11] by Brousse. It cites various large companies that have opted for one solution or the other. The main motivation for using a Monorepo is the corporate culture. The main aim is to improve internal communication between teams and avoid information silos. In addition, Monorepos bring their own class of challenges, as the example cited from Microsoft shows.

[…] Microsoft scaled Git to handle the largest Git Monorepo in the world […]

Even though Brousse speaks positively about the use of Monorepos in his work, only a few companies really use such a concept successfully, which in turn raises the question of why other companies have deliberately chosen not to use Monorepos.

If we examine long-term projects that implement an application architecture as a monolith, we find identical problems to those that occur in a Monorepo. In addition to the statistical circumstances described above, which can lead to semantic merge conflicts, there are other aspects such as security and erosion of the architecture.

These problems are countered with a modular architecture of independent components with the loosest possible coupling. Microservices are an example of such an architecture. A quote from Simon Brown suggests that many problems in software development can be traced back to Conway’s Law [12].

If you can’t build a monolith, what makes you think microservices are the answer?

This is because components of a monolith can also be seen as independent modules that can be outsourced to their own repository. The following rule has proven to be very practical for organizing the source code in a repository: Never use more than one technology, module, component or standalone context per repository. This results in different sections through a project. We can roughly distinguish between back-end (business logic) and front-end (GUI or presentation logic). However, a distinction by technology or programming language is also very useful. For example, several graphical clients in different technologies can exist for a back-end. This can be an Angular or Vue.js JavaScript web client for the browser or a JavaFX desktop application or an Android Mobile UI.

The elegant implementation of using multiple repositories often fails due to a lack of knowledge about the correct use of repository managers, which manage and provide releases of binary artifacts for projects. Instead of reusing artifacts that have already been created and tested and integrating them into an application in your own project via the dependency mechanism of the build tool, I have observed very unconventional and particularly error-prone integration attempts in my professional career.

The most error-prone integration of different modules into an application that I have experienced was done using so-called externals in the SCM Subversion. A repository was created into which the various components were linked. Git offers a similar mechanism called submodules [13]. This decision limited the branch strategy in the project exclusively to the use of the main development branch. The release process was also very limited and required great care. Subsequent deliveries of important bug fixes proved to be particularly problematic. The use of submodules or externals should therefore be avoided at all costs.

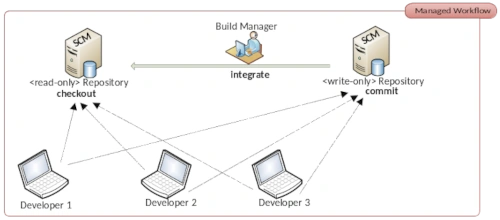

Branching and merging also influence the various workflows for organizing collaboration in SCM systems. A very old and now rediscovered workflow is the so-called Dictatorship Workflow. It has its origins in the open source community and prevents faulty implementations from destroying the code base. The Dictator plays a central role as a gatekeeper: he checks every single commit in the repository, and only the commits that meet the quality requirements are included in the main development branch. For very large projects with many contributions, a single person can no longer manage this task, which is why the so-called Lieutenant was introduced as an additional instance.

With the open source code hosting platform GitHub, the Dictatorship workflow has experienced a renaissance and is now called Pull Request. GitLab has tried to establish its own name with the term Merge Request.

This approach is not new in the commercial environment either. The entire architecture of IBM’s Rational Synergy, released in 1990, is based on the principle of the Dictatorship workflow. What has proven to be useful for open source projects has turned out to be more of a bottleneck in the commercial environment. Due to the pressure to provide many features in a project, it can happen that the Pull Requests pile up. This leads to many small branches, which in turn generate an above-average number of merge conflicts due to the accumulation, as the changes made are only made available to the team with a delay. For this reason, workflows such as Pull Requests should also be avoided in a commercial environment. To ensure quality, there are more effective paradigms such as continuous integration, code inspections and refactoring.

Christian Bird from Microsoft Research formulated very clearly in the paper The Effect of Branching Strategies on Software Quality [15] that the branch strategy does have an influence on software quality. The paper also makes many references to the repository organization and the team and organization structure. This section narrows down the context to semantic merge conflicts and reveals how negative effects increase with increasing complexity.

Conflict Sets

The paper “A State-of-the-Art Survey on Software Merging” [16] written by Tom Mens in 2002 distinguishes between syntactic and semantic merge conflicts. While Mens mainly focuses on syntactic merge conflicts, this paper mainly deals with semantic merge conflicts.

In the practice-oriented specialist literature on topics that deal with Source Control Management as a sub-area of the disciplines of Configuration Management or DevOps, the following principle applies unanimously: keep branches as short-lived as possible or synchronize them as often as possible. This insight has already been taken up in many scientific papers and can also be found in “To Branch or Not to Branch” by Premraj et al. [17], among others.

In order to clarify the influence of the branch strategy, I differentiate the branches presented in the section branch models into two categories. Backward-oriented branches, which are referred to below as reverse branches (RB), and forward-oriented branches, which are referred to as forward branches (FB).

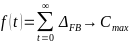

Reverse branches are created after an initial release for subsequent supply, whereas a release branch, developer branch, pull request or feature branch is directed towards the future. Since such forward branches are often long-lived and are processed for at least a few days until they are included in the main development branch, the period in which the main development branch is not synchronized into the forward branch is considered a growth factor for conflicts. This can be expressed as a function over time.

The number of conflicts arising for a forward branch increases significantly if there is a lot of activity in the main development branch and increases with each day in which these changes are not synchronized in the forward branch. The largest possible conflict set between the two branches therefore accumulates over time.
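One rough way to observe this growth is to count how many commits of the main development branch are missing from a forward branch. The sketch below uses a throwaway repository with assumed branch names and empty commits. The resulting count is only a proxy for the size of the conflict set, not a measure of actual conflicts.

```shell
#!/bin/sh
# Minimal sketch: how far has a forward branch drifted from main?
set -e
repo=$(mktemp -d)                      # throwaway repository (demo data only)
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/main  # name the initial branch "main"
git config user.name "Demo User"
git config user.email "demo@example.org"

git commit -q --allow-empty -m "main: start"

# The forward branch receives one commit and is then left unsynchronized.
git checkout -q -b feature
git commit -q --allow-empty -m "feature: work in progress"

# Meanwhile, activity continues on the main development branch.
git checkout -q main
for i in 1 2 3; do
  git commit -q --allow-empty -m "main: change $i"
done

# With the three-dot range, the first number counts commits reachable only
# from main and the second counts commits reachable only from feature.
set -- $(git rev-list --left-right --count main...feature)
behind=$1
ahead=$2
echo "feature is $behind commit(s) behind and $ahead commit(s) ahead of main"
```

Running such a check regularly, for example in a build job, shows when a forward branch should be synchronized with the main development branch again.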

The number of all changes in a reverse branch is limited to the resolution of one error, which considerably limits the number of conflicts that arise. This results in a minimal conflict set for this category. This can be formulated in the following two axioms:

This also explains the practices for pessimistic version control and optimistic version control described by Mens in [16]. I already demonstrated that conflicts can arise even without branches. If the best practices described in this thesis are adopted, there is little reason to introduce practices such as code freeze, feature freeze or branch blocking. This is because all the strategies established from pessimistic version control to deal with semantic merge conflicts only lead to a new type of problem and prevent modern automation concepts in the software development process.

Conclusion

With a view to a high degree of automation in DevOps processes, it is important to simplify complex processes as much as possible. Such simplification is achieved through the application of established standards. An important standard is, for example, semantic versioning, which simplifies the view of releases in the software development process. In the agile context it is also better to talk about production candidates instead of release candidates.
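As a small illustration of why semantic versioning lends itself to automation, the following sketch increments a plain MAJOR.MINOR.PATCH version number. The function name `bump_version` is hypothetical, and pre-release or build metadata suffixes are deliberately not handled.

```shell
#!/bin/sh
# Minimal sketch: increment one part of a MAJOR.MINOR.PATCH version.
# usage: bump_version <version> major|minor|patch
bump_version() {
  version=$1
  part=$2
  major=${version%%.*}   # text before the first dot
  rest=${version#*.}     # text after the first dot
  minor=${rest%%.*}
  patch=${rest#*.}
  case $part in
    major) major=$((major + 1)); minor=0; patch=0 ;;  # lower parts reset to 0
    minor) minor=$((minor + 1)); patch=0 ;;
    patch) patch=$((patch + 1)) ;;
    *) echo "unknown part: $part" >&2; return 1 ;;
  esac
  echo "$major.$minor.$patch"
}

bump_version 1.4.2 minor
```

Because the rules are purely mechanical, a release job can derive the next version number without manual intervention.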

The implementation phase is completed by a release and the resulting artifact is immutable. After a release, a test phase is initiated. The results of this test phase are assigned to the tested release and documented. Only after a defined number of releases, when sufficient functionality has been achieved, is a release initiated that is intended for productive use.

The procedure described in this way significantly simplifies the branch model in the project and allows the best practices suggested in this paper to be easily applied. As a result, the development team has to deal less with semantic merge conflicts. The few conflicts that arise can be easily resolved in a short time.

An important instrument for avoiding long-lived feature branches is the feature flag design pattern, also known as feature toggle. In the 2010 article Feature Flags [18], Martin Fowler describes how functionality in a software artifact can be enabled or disabled by a configuration in production use.
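The idea can be sketched in a few lines. In the following example the flag name `FEATURE_NEW_REPORT` and the function `render_report` are hypothetical, and the flag is read from an environment variable. Real projects often use a configuration file or a dedicated service instead.

```shell
#!/bin/sh
# Minimal sketch: a feature flag read from the environment.
render_report() {
  # The unfinished feature ships with the artifact but stays off by default.
  if [ "${FEATURE_NEW_REPORT:-off}" = "on" ]; then
    echo "new report layout"
  else
    echo "classic report layout"
  fi
}

FEATURE_NEW_REPORT=off
render_report            # flag disabled: existing behavior

FEATURE_NEW_REPORT=on
render_report            # flag enabled by configuration, not by a new build
```

Since the new code path is already part of the main development branch, it does not have to live in a separate long-running branch until it is finished.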

The fact that version control is still very important in software development is shown in various chapters of Qiao Liang’s book “Continuous Delivery 2.0 – Business leading DevOps Essentials” [19], published in 2022. In the standard literature for DevOps practitioners, rules for avoiding semantic merge conflicts are usually described very well. This paper shows in detail how conflicts arise and gives an appropriate explanation of why these conflicts occur. With this background knowledge, we can decide whether a merge or a rebase should be performed.

Future Work

Given the many different source control management systems currently available, it would be very helpful to establish a common query language for code repositories. This query language should act as an abstraction layer between the SCM and the client or server and be designed as a Domain Specific Language (DSL). It should not be a copy of well-known query languages such as SQL. In addition to the usual interactions, it would be desirable to find a way to formulate entire processes and define the associated roles.

References

[1] Marc J. Rochkind. 1975. The Source Code Control System (SCCS). IEEE Transactions on Software Engineering. Vol. 1, No. 4, 1975, pp. 364–370. doi: 10.1109/tse.1975.6312866

[2] Nayan B. Ruparelia. 2010. The History of Version Control. ACM SIGSOFT Software Engineering Notes. Vol. 35, 2010, pp. 5–9.

[3] Eugene W. Myers. 1986. An O(ND) Difference Algorithm and Its Variations. Algorithmica. Vol. 1, 1986, pp. 251–266. https://doi.org/10.1007/BF01840446

[4] Buffenbarger, J. 1995. Syntactic software merging. Lecture Notes in Computer Science. Vol 1005, 1995. doi: 10.1007/3-540-60578-9_14

[5] P. Bourque, R. E. Fairley, 2014, SWEBOK v3.0 – Guide to the Software Engineering Body of Knowledge, IEEE, ISBN: 0-7695-5166-1

[6] V. Driessen, 2023, A successful Git branching model, https://nvie.com/posts/a-successful-git-branching-model/

[7] Tom Preston-Werner, 2023, Semantic Versioning 2.0.0, https://semver.org

[8] Marco Schulz. 2022. Expressions for Source Control Management Systems. American Journal of Software Engineering and Applications. Vol. 11, No. 2, 2022, pp. 22–30. doi: 10.11648/j.ajsea.20221102.11

[9] Git Rebase Documentation, 2023, https://git-scm.com/book/en/v2/Git-Branching-Rebasing

[10] Robert C. Martin, 2018, Clean Architecture, Pearson, ISBN: 0-13-449416-4

[11] Brousse N., 2019, The Issue of Monorepo and Polyrepo in large enterprises. Companion Proceedings of the 3rd International Conference on the Art, Science, and Engineering of Programming. No. 2, 2019, pp. 1–4. doi: 10.1145/3328433.3328435

[12] Melvin E. Conway, 1968, How do committees invent? Datamation. Vol. 14, No. 4, 1968, pp. 28–31.

[13] Git Submodules Documentation, 2023, https://git-scm.com/book/en/v2/Git-Tools-Submodules