The wall of fire, or firewall, was always a spectacular event in the days of the circus and traveling performers. People or animals would leap through it to the cheers of the crowd. However dramatic such a performance may have seemed to the spectators, for the acrobat it was a carefully calculated act. After all, fire is one of the most powerful primal elements that humankind has tamed.

In cybersecurity, the firewall is one of the most fundamental protective mechanisms for networked computer systems. This applies to home computers as much as to mainframes in data centers. However, the idea of igniting one or more rings of fire around a computer belongs in the circus ring, even though movies love to depict it melodramatically. Statements like “The first firewall has fallen and the second is already 70% breached” are perfect for the screen but have nothing to do with reality.

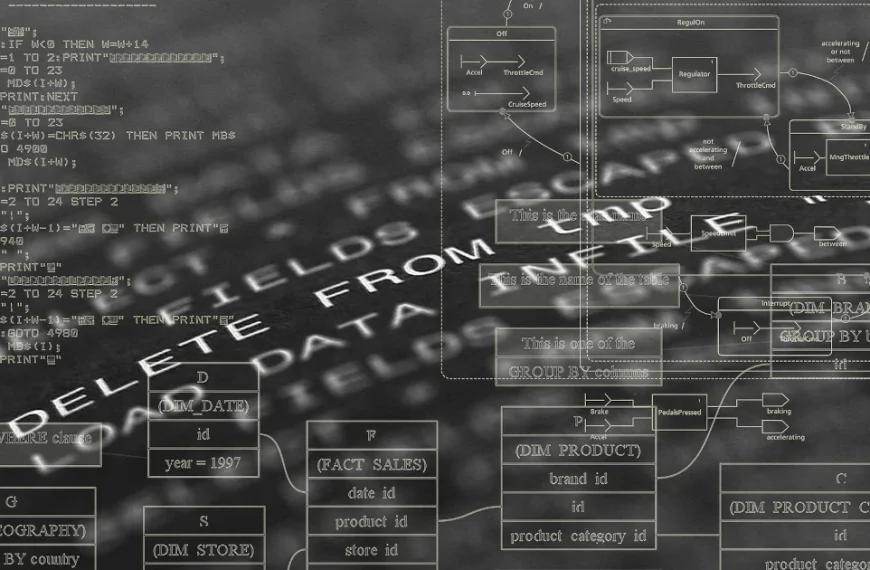

Before we delve into the details, let’s briefly consider how computer systems are connected to form a network. The crucial detail we need is the IP address. Put simply, the IP address is the telephone number of a computer or device on the network. To connect to another computer, you need to know its IP address, just as you need a phone number to call someone. Once the connection is established, information, or data, can be exchanged between the two devices. The various internet protocols break this information down into small, manageable packets. A protocol is a defined set of rules that all participants must follow. This is easily compared to sending a letter or package through the mail:

- Write the letter.

- Put the letter in an envelope and seal it.

- Write the recipient’s address on the front of the envelope.

- Write the sender’s address on the back of the envelope.

- Attach a sufficient stamp to the envelope and drop it in the mailbox.

Without knowing the internal workings of the postal service, we can assume that the letter will reach its recipient if we follow the protocol correctly. The same applies to the internet. Depending on the type of data, the computer selects a suitable program that implements the protocol for us. Building on the Internet Protocol (IP), which governs the connection between computers, there are further protocols that handle the data itself. Well-known examples include HTTP(S) for websites and FTP for transferring files.
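The letter analogy can be sketched in a few lines of Python. This is only an illustration of the idea of addressed packets, not how IP is actually implemented: the `Packet` class, the function names, the chunk size of 8 bytes, and the two documentation-range IP addresses are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    sender: str      # return address on the back of the envelope
    recipient: str   # address on the front of the envelope
    sequence: int    # position of this chunk within the original message
    payload: bytes   # the piece of the "letter" carried by this packet


def split_into_packets(data: bytes, sender: str, recipient: str, size: int = 8) -> list:
    """Cut the data into chunks of at most `size` bytes and address each one."""
    return [
        Packet(sender, recipient, seq, data[start:start + size])
        for seq, start in enumerate(range(0, len(data), size))
    ]


def reassemble(packets) -> bytes:
    """Put the chunks back in order, even if they arrived shuffled."""
    ordered = sorted(packets, key=lambda p: p.sequence)
    return b"".join(p.payload for p in ordered)


message = b"Hello from the hallway!"
packets = split_into_packets(message, "192.0.2.10", "198.51.100.7")
restored = reassemble(reversed(packets))  # simulate out-of-order arrival
```

Each packet carries both addresses, so it can travel on its own, and the sequence number lets the recipient restore the original order.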

Now let’s get to the main topic. What exactly is a firewall and what is it used for? Imagine a very long hallway with a great many doors: 65,536 of them, to be precise. These doors can be opened inwards or outwards. We can therefore move from the hallway to the outside (outgoing traffic) or from the outside into the hallway (incoming traffic).

These doors are called ports in technical jargon, and each one has a fixed number. Programs that communicate with other computers are usually bound to such a port. Here’s a small example: long before WhatsApp and similar apps, there was Internet Relay Chat, or IRC for short. The port officially registered for IRC is 194, although in practice many IRC servers listen on port 6667 instead. An important characteristic of ports is that once a program is bound to a port, no other program can use that port at the same time.
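The "one program per port" rule can be observed directly with Python's standard `socket` module. The following is a minimal sketch, assuming a typical operating system default (no address-reuse options set); the helper name `bind_listener` is made up for this example, and port 0 simply asks the operating system for any free port.

```python
import socket
from typing import Optional


def bind_listener(port: int) -> Optional[socket.socket]:
    """Try to bind a TCP listener to 127.0.0.1:port; return None if that fails."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        sock.bind(("127.0.0.1", port))
        sock.listen()
        return sock
    except OSError:
        # Typically "address already in use": another socket holds the port.
        sock.close()
        return None


first = bind_listener(0)            # port 0: let the OS pick a free port
port = first.getsockname()[1]       # the port number that was actually assigned
second = bind_listener(port)        # a second program wants the same door

print(f"port {port}: second bind succeeded? {second is not None}")
first.close()
```

As long as the first socket holds the port, the second attempt is refused; once the first program closes its socket, the port becomes available again.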

A firewall allows you to selectively block these doors to and from the internet. Basically, there are four different options for each port:

- Completely blocked,

- Inbound blocked,

- Outbound blocked, and

- Completely open.

Let’s return to our IRC example. If the port is completely blocked, we can neither send nor receive messages. The program can still be started on our computer, but it cannot establish a connection to the network. If only inbound traffic is blocked, we cannot receive messages, but we can send them. If only outbound traffic is blocked, we can receive messages, but we cannot send any ourselves.
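The four options can be written down as a small lookup table. This is a deliberately simplified model of the description above, not the rule format of any real firewall; the names `PORT_STATES` and `is_allowed` are assumptions for illustration.

```python
# For each of the four states: which traffic directions may pass the door.
PORT_STATES = {
    "completely blocked": set(),
    "inbound blocked": {"outbound"},
    "outbound blocked": {"inbound"},
    "completely open": {"inbound", "outbound"},
}


def is_allowed(state: str, direction: str) -> bool:
    """Return True if traffic in `direction` may pass a port in `state`."""
    return direction in PORT_STATES[state]


# The IRC example: with inbound traffic blocked we can send but not receive.
can_send = is_allowed("inbound blocked", "outbound")
can_receive = is_allowed("inbound blocked", "inbound")
print(f"send: {can_send}, receive: {can_receive}")
```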

The biggest problem with firewalls is that they are often not configured correctly. We distinguish between two approaches here. The most common one is called a blacklist: only the ports named in the list are regulated, and everything else stays open. Considering that there are 65,536 ports, this can become a very long and unwieldy list, and the risk of forgetting something is very high. The advantage of this approach is that it is very forgiving for inexperienced users, because every program that is not on the list simply keeps working. The other approach is the so-called whitelist, which works in exactly the opposite way. By default, all ports are closed, and the user must explicitly specify which ports may be opened. As you can easily imagine, operating in whitelist mode requires a certain amount of experience. You have to know which port belongs to which program and how to enter these rules into the firewall.
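The difference between the two modes comes down to the default for ports that nobody listed. Here is a minimal sketch of that logic; the function names and the example port sets are assumptions chosen for illustration, not a real firewall configuration.

```python
def allowed_by_blacklist(port: int, blocked_ports: set) -> bool:
    """Blacklist mode: everything is open unless it is explicitly listed."""
    return port not in blocked_ports


def allowed_by_whitelist(port: int, allowed_ports: set) -> bool:
    """Whitelist mode: everything is closed unless it is explicitly listed."""
    return port in allowed_ports


blacklist = {23, 194}    # hypothetical: block Telnet and IRC
whitelist = {80, 443}    # hypothetical: allow only web traffic

# Port 3306 (MySQL) was forgotten in both lists.
forgotten_open_in_blacklist = allowed_by_blacklist(3306, blacklist)
forgotten_open_in_whitelist = allowed_by_whitelist(3306, whitelist)

# How many of the 65,536 ports are open in each mode?
open_with_blacklist = sum(allowed_by_blacklist(p, blacklist) for p in range(65536))
open_with_whitelist = sum(allowed_by_whitelist(p, whitelist) for p in range(65536))
```

A forgotten port stays open under a blacklist and stays closed under a whitelist, which is why the whitelist is the safer default but demands more knowledge from the user.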

As we can see, the image of drawing a ring of fire around the computer is not a suitable way to visualize how a firewall works. Once a door, that is, a port on the computer, is blocked, installing a second firewall on the same computer makes little sense. In this case, the saying “two is better than one” doesn’t apply.

Attacks on firewalls typically involve searching for open ports and then exploiting them. This is done using so-called port scanners. Anyone who wants to try out such a port scanner should only do so with authorization, for example on their own machine. Scanning other people’s computers for open ports may already constitute a criminal offense in Germany and many other parts of the world.
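To show what a port scanner does in principle, here is a minimal sketch that only ever talks to the local machine (`127.0.0.1`), so it stays within what you are authorized to test. The function name `scan_local_ports` and the timeout value are assumptions for this example; real scanners are far more sophisticated.

```python
import socket


def scan_local_ports(ports, timeout: float = 0.2) -> list:
    """Return the ports on 127.0.0.1 that accept a TCP connection."""
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception.
            if sock.connect_ex(("127.0.0.1", port)) == 0:
                open_ports.append(port)
    return open_ports


# Demo: open one door ourselves, then check that the scanner finds it.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))     # port 0: let the OS pick a free port
listener.listen()
demo_port = listener.getsockname()[1]

found = scan_local_ports([demo_port])
listener.close()
print(f"open ports found: {found}")
```

The scanner simply knocks on each door and notes which ones answer, which is exactly the information an attacker looks for first.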

Another, very advanced attack scenario involves attacking the firewall program itself. Here, the aim is to find and exploit any existing programming errors in the firewall.

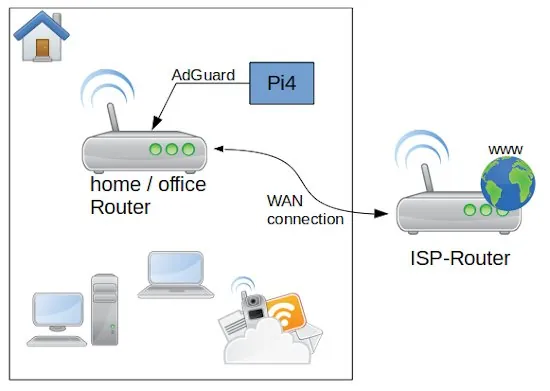

Firewalls are available for every operating system in a wide variety of forms. Professional network devices such as routers and switches may also have integrated firewalls. In this case, the router acts as a network computer and protects all devices connected to it. Before deciding on a specific program, make sure that it is as easy to use as possible and comes from a reputable manufacturer.

An incomplete list of the best-known standard ports:

| Port number | Service name | Description |
|---|---|---|
| 21 | FTP | File Transfer Protocol |
| 22 | SSH/SCP | Secure Shell |
| 23 | Telnet | Telnet protocol |
| 25 | SMTP | Simple Mail Transfer Protocol |
| 53 | DNS | Domain Name System |
| 80 | HTTP | Hypertext Transfer Protocol (HTTP) |
| 110 | POP3 | Post Office Protocol v. 3 |
| 143 | IMAP4 | Internet Message Access Protocol v. 4 |
| 443 | HTTP over SSL | Hypertext Transfer Protocol Secure (HTTPS) |
| 465 | SMTP over TLS/SSL, SSM | Authenticated SMTP over TLS/SSL (SMTPS) |
| 587 | SMTP | Email message submission |
| 993 | IMAP4 over SSL | Internet Message Access Protocol |
| 995 | POP3 over SSL | Post Office Protocol 3 |
| 1194 | OpenVPN | OpenVPN |
| 1725 | Steam | Valve Steam Client uses port 1725 |
| 2967 | Symantec AV | Symantec System Center |
| 3074 | XBOX Live | Xbox LIVE and Games for Windows |
| 3306 | MySQL | MySQL database system |
| 3724 | World of Warcraft | Some Blizzard games |