The prophecy that programmers will become obsolete because computers will essentially program themselves is now several decades old. So far, however, the profession hasn’t died out. Nevertheless, some fundamental changes have occurred in recent years. The capabilities of current AI systems evoke a wide range of emotions: some people hate them, others love them. However, as is so often the case in life, things aren’t black and white. Therefore, I would like to share my experiences with AI-supported programming and offer an assessment of the overall situation.

The development is exponential. Roughly speaking, each leap doubles performance and takes only half as long as the previous one.

We are currently in the third iteration. The next iteration, with double the performance, will no longer take 18 months, but a maximum of 9 months. My key takeaway for software development is this: AI can massively support skilled programmers and administrators in their work and significantly boost their performance. However, like everything in life, this also has its downsides. In this article, I’ll take the time to shed some light on the background of this topic.
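To make the arithmetic tangible, here is a minimal sketch of that rule of thumb. It assumes my reading of the numbers above: the current, third iteration took 18 months, and every following leap doubles performance in half the time. The function name and its parameters are made up for illustration, and the model is of course a rough simplification.

```python
def project_iterations(start_iteration, start_duration_months, count):
    """Project (iteration, relative performance, duration in months).

    Assumption: every leap doubles performance and takes half as long
    as the previous one. Performance is relative to the start iteration.
    """
    rows = []
    performance = 1.0
    duration = float(start_duration_months)
    for offset in range(count):
        rows.append((start_iteration + offset, performance, duration))
        performance *= 2  # performance doubles with each leap
        duration /= 2     # the next leap takes half as long
    return rows


for iteration, performance, months in project_iterations(3, 18, 4):
    print(f"iteration {iteration}: {performance:g}x performance, {months:g} months")
```

Under these assumptions, the fourth iteration arrives after 9 months, the fifth after 4.5 more, and the sixth after another 2.25.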

Some time ago, I kept seeing posts on my timeline on the relevant social media platforms from junior developers raving about vibe coding. At first, I thought it was about creating the optimal atmosphere for working—things like the right music and essential oils to get into the perfect workflow. But no. That wasn’t what it was about. People who knew nothing about programming could suddenly generate code that seemingly did exactly what the authors intended. Sounds great at first, but the reality is quite different.

We’ve been familiar with the “copy-paste” approach for quite some time. We didn’t need AI for that; it wasn’t so long ago that people would Google code snippets and find them on websites like Stack Overflow. Fragments of supposed recommendations were quickly copied into their own codebases, and if it worked, everything was left unchecked, exactly as it had been copied. These self-proclaimed experts weren’t even able to understand the copied code snippets, let alone adapt them correctly to their own projects. Hence the expression “copy-pastes-along.” The fact that these code snippets could cause massive problems in production environments was conveniently ignored by these supposed experts. The spectrum of issues ranged from poor performance to critical security vulnerabilities. This situation hasn’t changed with the widespread availability of AI. Therefore, I predict that in the coming years, a flood of low-quality software will compete for users’ attention.

Here I can only quote Grady Booch: “A fool with a tool is still a fool.” My experiences with using LLMs for programming in my own projects have left me rather lukewarm. From what I see, it’s mostly project managers and people who can’t program who massively overestimate the capabilities of AI models on social media.

I’m generally a skeptical person and, of course, I’ve tried the usual suspects among the AI models in my daily work. I specifically looked at the free community versions, without paying for them, because these are the versions with which the world will be flooded with bad software in the future. Here, too, I can cut to the chase. All of Grok’s results in the areas of programming, scripting, and configuration were below average. It felt a bit like being in an old forum. Instead of asking those annoying “why” and “how come” questions, Grok failed to get to the point, let alone present a working solution. The model did, however, shine with meaningless motivational slogans like “Team leader on steroids.” It reminds me a bit of Joseph Weizenbaum’s statements about virtual conversations and his ELIZA chatbot.

Things went somewhat better with DeepSeek. At least it produced results that were immediately usable and seemingly did what they were intended to do. However, upon closer inspection, the code was cluttered with all sorts of unnecessary elements. I didn’t conduct any further analysis to determine whether this had introduced security-critical issues. Statistically, one can assume that the more code there is, the higher the probability of errors. Opus, on the other hand, constantly annoyed me by requiring a subscription even for minimal queries. I actually achieved the best results with ChatGPT, although the answers were sometimes contradictory or redundant.

Anyone considering setting up a local instance of one of the free AI models, for example with LM Studio, and buying an exorbitantly expensive graphics card for it, should know: you can save your money. The freely available models are nowhere near as powerful as their commercial counterparts. After all, it wouldn’t exactly be good business for the vendors to create their own competition. The question then arises: when does it actually make sense to work with AI programming models to truly accelerate your output? In my experience, it’s less about what you use and more about how you use it. For this, we need to make a few important distinctions.

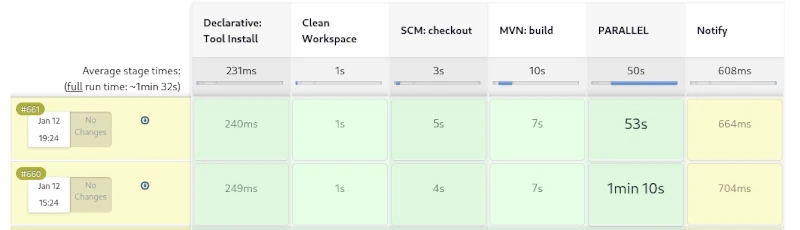

An AI agent that is directly integrated into the IDE and has complete freedom is not a good idea. You often hear that such an agent does things it shouldn’t, and that instructions to stop have little effect. Anyone who still insists on trying it is well advised to establish a clean branching model with appropriate access restrictions for the agent. Although I generally reject pull requests in commercial development teams, this strategy is essential when using AI agents. The build logic, such as the Maven POM or the Gradle project file, should also be off limits to the agent. The proven security principle applies here as well: as little as possible, as much as necessary. Locking down the build logic prevents the AI agent from arbitrarily choosing its own dependency versions.
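One way to enforce the lock on the build logic is a small check that runs before an agent's branch may be merged. The following is only a sketch under my own assumptions: the list of protected file names and the function name are hypothetical, and the list of changed paths would come from your version control system, for example from the output of `git diff --name-only` between the main branch and the agent's branch.

```python
from pathlib import PurePosixPath

# Hypothetical list of build files the agent must not modify.
PROTECTED_NAMES = {
    "pom.xml",
    "build.gradle",
    "build.gradle.kts",
    "settings.gradle",
    "settings.gradle.kts",
}


def forbidden_changes(changed_paths):
    """Return the changed paths that touch protected build logic."""
    return [
        path
        for path in changed_paths
        if PurePosixPath(path).name in PROTECTED_NAMES
    ]


changed = ["src/main/java/App.java", "pom.xml", "module-a/build.gradle"]
violations = forbidden_changes(changed)
if violations:
    print("Rejected, build logic was modified:", ", ".join(violations))
```

The check compares only file names, so it also catches build files in submodules. A real setup would reject the merge when the list is not empty instead of just printing a message.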

It’s also important to ensure that code changes remain manageable and are implemented iteratively. Although it might seem a bit clunky, I use AI to generate individual functions or classes. I then copy the suggested code snippets into my IDE and review them line by line. Based on my quality criteria, I modify the code and use custom test cases to verify that everything works as intended. Generating extensive test data for load tests is an ideal example of a task that can and should be delegated to AI. Of course, it’s essential to continuously monitor test quality, for which test coverage is a key indicator. Even though the approach described above takes a bit more time, it offers clear advantages over the quick route. I’m able to understand the code changes and assign them to the relevant requirements. Another significant factor is that this method helps me further develop my programming skills. Quickly skimming and unreflectively accepting the proposed solution would likely cause my skills to atrophy over time, leading to a continuous decline in my performance. That would not secure my job in the long run.
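To illustrate the review step, here is a small, made-up example. Assume the model suggested the function `normalize_username`; both the function and its rules are hypothetical and only stand in for whatever was actually generated. The test cases are the part I write myself, including the inputs I expect to be rejected.

```python
import unittest


def normalize_username(raw: str) -> str:
    """Hypothetical generated function: trim, lowercase, and validate a username."""
    name = raw.strip().lower()
    if not name or not name.isalnum():
        raise ValueError(f"invalid username: {raw!r}")
    return name


class NormalizeUsernameTest(unittest.TestCase):
    """Custom test cases written during the line-by-line review."""

    def test_trims_and_lowercases(self):
        self.assertEqual(normalize_username("  Alice42 "), "alice42")

    def test_rejects_empty_input(self):
        with self.assertRaises(ValueError):
            normalize_username("   ")

    def test_rejects_special_characters(self):
        with self.assertRaises(ValueError):
            normalize_username("alice; DROP TABLE users")
```

Saved as a module, the tests can be run with `python -m unittest`. The point is not this particular function, but that every accepted snippet is pinned down by tests that express my own expectations.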

This brings me to another point regarding working with LLMs: how can you formulate efficient prompts, i.e., instructions for the model? Since communication with the model occurs via natural language, it’s essential to structure your thoughts effectively in order to articulate them clearly. A course in prompt engineering won’t help with that. If you can’t clearly communicate your ideas and concepts to other people, you’ll achieve little success with AI either. So, what really matters? The answer is so simple it’s easy to miss: clear communication in short, concise, and understandable sentences. No complicated, convoluted sentences to satisfy your ego. Of course, you also need a concrete, fully thought-out idea of what you expect. Vague formulations leave too much room for interpretation. Anyone who can explain their intentions to a preschooler in a few minutes will also achieve good results with AI. I’d like to leave it at that and discuss another aspect.

I’m often asked how I assess the quality of the source code generated by LLMs. The answer isn’t straightforward, as there are various criteria to consider. UI code is a whole other story. UI/UX is subject to trends and changes more frequently than business logic. In my Java test automation training courses, I strongly advise against creating UI tests altogether, because the cost-benefit ratio simply isn’t favorable in this area. For generated UI code, this means I only look at functionality and appearance and leave it at that. The situation is completely different with business logic for backend systems. Here, I’ve found that the code produced by LLMs is sometimes better in terms of security than that of many programmers. The usual safeguards, such as parameterized SQL queries, input validation, and filtering, are considered and implemented. However, there’s still room for improvement in performance and readability. I expect significant improvements in these areas in about two more iterations. This is also a key reason why LLM-based optimization of an existing codebase is never truly complete and should be repeated with each new generation of LLMs.
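For readers who want to see what these security checks look like in practice, here is a minimal sketch using Python's built-in `sqlite3` module. The table, the function name `find_user`, and the length limit are assumptions chosen for the example; they are not taken from any real project.

```python
import sqlite3


def find_user(connection, username):
    """Look up a user by name with input validation and a bound SQL parameter."""
    # Input validation: reject anything that is not a reasonably sized string.
    if not isinstance(username, str) or not (1 <= len(username) <= 64):
        raise ValueError("username must be a string of 1 to 64 characters")
    # The "?" placeholder passes the value as data, never as part of the SQL text.
    cursor = connection.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    )
    return cursor.fetchone()


connection = sqlite3.connect(":memory:")
connection.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
connection.execute("INSERT INTO users (name) VALUES (?)", ("alice",))

print(find_user(connection, "alice"))             # (1, 'alice')
print(find_user(connection, "alice' OR '1'='1"))  # None
```

The second call shows why the placeholder matters: the classic injection string is treated as an ordinary, non-existent user name instead of changing the query.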

My strongest criticism of companies, as well as developers and administrators, who rely excessively on LLMs in their daily projects is that they could quickly lose control of their products and services. The issue as a whole cannot be reduced to black and white, because the range of nuances is too vast. Therefore, it is up to us to follow the motto of the Enlightenment, as formulated by Immanuel Kant: “Have the courage to use your own understanding.”

Finally, I’d like to discuss the cost factor for high-performance AI models. This is where unpleasant surprises can quickly arise. Let’s assume we have someone with a great startup idea who is also able to formulate correct and meaningful requirements clearly. Ideally, they even possess rudimentary programming skills, enough to read, understand, and make simple modifications to source code. This person decides to implement the idea independently, without a programmer. Even if the project is broken down into smaller parts and these tasks are assigned to freelancers, several thousand euros can quickly accumulate, depending on the scope of the work packages. If these tasks are instead handed to AI agents, the usual flat rates of 20 to 50 euros per month no longer apply, and token-based billing becomes necessary. Depending on the scope of the prompt and the length of the answer, a single request can consume thousands of tokens, and agents issue many such requests in a row. Providers typically quote prices per million tokens, so the cost of an individual task is hard to estimate in advance. If no limit is set, several thousand euros can be consumed in just a few hours. Furthermore, it’s impossible to predict the quality of the generated source code beforehand. Every improvement requires more tokens, which must be paid for, a cost factor that doesn’t arise with human developers. Even though AI agents might not seem to incur social security contributions or similar expenses at first glance, this doesn’t mean projects can be implemented more cheaply. What’s more important is having someone on board who knows how to structure source code so that it can be easily extended later.
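Since a missing limit is the real danger here, a spending cap is worth sketching. The following is a minimal example under my own assumptions: the class `BudgetGuard`, the amounts, and the idea that the caller knows the cost of each request are all hypothetical. In practice, you would take the cost from your provider's usage data and, where available, also set a hard limit in the provider's account settings.

```python
from decimal import Decimal


class BudgetExceeded(RuntimeError):
    """Raised when a request would push spending past the configured limit."""


class BudgetGuard:
    """Tracks spending and refuses requests beyond a fixed limit (amounts in EUR)."""

    def __init__(self, limit_eur):
        # Pass amounts as strings to avoid floating-point rounding issues.
        self.limit = Decimal(limit_eur)
        self.spent = Decimal("0")

    def charge(self, cost_eur):
        """Book the cost of one request and return the remaining budget."""
        cost = Decimal(cost_eur)
        if self.spent + cost > self.limit:
            raise BudgetExceeded(f"limit of {self.limit} EUR would be exceeded")
        self.spent += cost
        return self.limit - self.spent


guard = BudgetGuard("50")
print(guard.charge("20"))  # 30
print(guard.charge("25"))  # 5
try:
    guard.charge("10")
except BudgetExceeded as error:
    print("Stopped:", error)
```

The guard refuses the third request instead of silently overdrawing the budget, which is exactly the behavior you want from an agent that runs unattended for hours.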